GPTLocalhost

Local LLM access & model switching

Free

Supported platforms

web

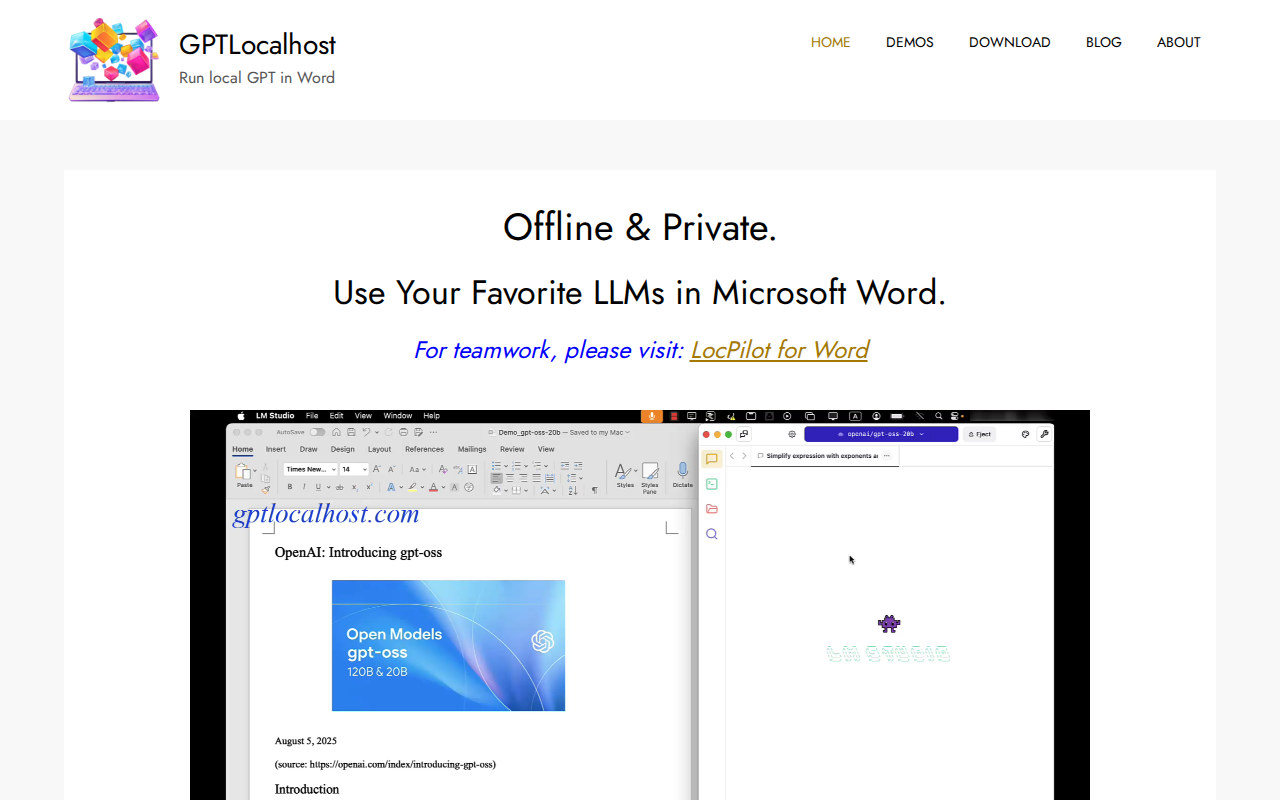

What is GPTLocalhost

GPTLocalhost provides a local environment for interacting with Large Language Models (LLMs). It lets users run and experiment with various LLMs on their own machines, giving them control over how the models are used. Unlike cloud-based services, GPTLocalhost keeps the models on your machine, which protects privacy and avoids network round trips. The platform supports switching between models, so users can compare different LLMs, such as IBM Granite 4, on tasks like contract analysis. A user-friendly interface and a straightforward installation process make it accessible to both developers and researchers. The main benefit is the ability to experiment with LLMs without relying on external APIs or paying for cloud usage, which suits users who prioritize data privacy and rapid prototyping.

GPTLocalhost's core features

Local LLM Execution

GPTLocalhost runs LLMs directly on your local machine instead of calling cloud-based APIs. This removes network round trips, keeps your data on your own hardware, and avoids per-call API fees, which is particularly useful for sensitive data or applications that need fast responses. Inference runs on your machine's CPU or GPU, so response speed depends on that hardware.

Seamless Model Switching

Easily switch between different LLMs, such as IBM Granite 4, to compare performance and suitability for various tasks. This feature is crucial for experimentation and selecting the optimal model for a specific use case. The platform provides a unified interface for interacting with different models, simplifying the evaluation process and enabling rapid prototyping.
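Model switching is easiest to reason about when every model sits behind the same call signature. The sketch below is a hypothetical illustration, not GPTLocalhost's actual API: `compare_models` and the `generate` callable are names introduced here, and the model names are placeholders. It sends one prompt to several models and collects the answers side by side for comparison.

```python
from typing import Callable, Dict, List

# Hypothetical backend signature: (model_name, prompt) -> response text.
# In practice this would wrap whatever local server hosts the models.
Generate = Callable[[str, str], str]


def compare_models(models: List[str], prompt: str, generate: Generate) -> Dict[str, str]:
    """Send the same prompt to each model and return {model: response}."""
    results: Dict[str, str] = {}
    for model in models:
        # Only the model name changes between calls; the prompt stays fixed,
        # so differences in output come from the models themselves.
        results[model] = generate(model, prompt)
    return results


if __name__ == "__main__":
    # Stand-in backend so the sketch runs without any model installed.
    def fake_generate(model: str, prompt: str) -> str:
        return f"[{model}] {len(prompt.split())} words received"

    answers = compare_models(["granite-4", "other-model"], "Summarize this clause.", fake_generate)
    for name, text in answers.items():
        print(name, "->", text)
```

Passing the backend in as a function keeps the comparison logic independent of how the models are actually served, so the same loop works whether `generate` calls a local HTTP server or a test stub.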

Privacy-Focused Design

By running LLMs locally, GPTLocalhost keeps your data on your own machine. This is particularly important for applications that handle sensitive information or must comply with strict data privacy regulations. Because prompts and documents are not sent to a third-party service, local execution removes the exposure to breaches or unauthorized access that comes with transmitting data to the cloud.
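Local execution only keeps data private if requests actually go to your own machine. A simple safeguard, sketched below under the assumption that the model is reached over HTTP, is to refuse any endpoint whose host is not a loopback address. `is_local_endpoint` is a hypothetical helper, not part of GPTLocalhost; it inspects only the URL and treats any hostname other than `localhost` as potentially remote.

```python
import ipaddress
from urllib.parse import urlparse


def is_local_endpoint(url: str) -> bool:
    """Return True if the URL's host is 'localhost' or a loopback IP literal."""
    host = urlparse(url).hostname  # lowercased, with IPv6 brackets stripped
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        # Covers 127.0.0.0/8 for IPv4 and ::1 for IPv6.
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Any other hostname could resolve to a remote machine, so reject it.
        return False
```

Calling this check before sending a prompt turns a misconfigured remote URL into an explicit refusal instead of a silent data transfer.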

User-Friendly Interface

GPTLocalhost offers a user-friendly interface for interacting with LLMs, making it accessible to both developers and non-technical users. The interface simplifies the process of submitting prompts, receiving responses, and managing different models. This ease of use accelerates the experimentation and development process.

Demo Availability

GPTLocalhost provides ready-made demos that showcase its capabilities. These demos help users quickly understand the platform's functionality and potential use cases. They often include pre-configured setups and example prompts, so users can get started without extensive configuration.

How to use GPTLocalhost

1. Visit the GPTLocalhost website and review the available demos and documentation.
2. Identify the specific LLM you wish to use (e.g., IBM Granite 4) and ensure you have the necessary access or credentials.
3. Follow the installation instructions provided on the website to set up GPTLocalhost on your local machine.
4. Configure the chosen LLM within GPTLocalhost, providing any required API keys or model paths.
5. Use the provided interface or API to interact with the LLM, submitting prompts and receiving responses.
6. Experiment with different models by switching between them within the GPTLocalhost environment to compare performance.
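Steps 4 and 5 depend on how the model is served. Many local runtimes, such as Ollama or LM Studio, expose an OpenAI-compatible `/v1/chat/completions` endpoint; whether a given GPTLocalhost setup talks to one is an assumption here. The sketch below builds and sends such a request using only the standard library. `build_chat_request`, `send_chat_request`, the default base URL (Ollama's default port), and any model name you pass are hypothetical. Switching models, as in step 6, amounts to changing the `model` field.

```python
import json
import urllib.request
from typing import Any, Dict, Tuple

# Assumption: a local server exposing an OpenAI-compatible API.
# 11434 is Ollama's default port; adjust for your runtime.
DEFAULT_BASE_URL = "http://localhost:11434/v1"


def build_chat_request(prompt: str, model: str,
                       base_url: str = DEFAULT_BASE_URL) -> Tuple[str, Dict[str, Any]]:
    """Return the endpoint URL and JSON payload for a single-turn chat request."""
    url = base_url.rstrip("/") + "/chat/completions"
    payload = {
        "model": model,  # changing this string is how you switch models
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return url, payload


def send_chat_request(url: str, payload: Dict[str, Any], timeout: float = 120.0) -> str:
    """POST the payload and return the assistant's reply text."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.load(resp)
    # OpenAI-compatible responses put the text in choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

For example, `send_chat_request(*build_chat_request("List the termination clauses.", "granite4"))` would query whichever model the local server registers under the placeholder name `granite4`. Keeping request construction separate from the network call makes the payload easy to inspect before anything is sent.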

Use cases of GPTLocalhost

Contract Analysis

Legal professionals can use GPTLocalhost with models like IBM Granite 4 to analyze contracts locally. They can upload documents, identify key clauses, and assess potential risks without sending sensitive data to external servers. This improves efficiency and ensures data privacy during contract review processes.
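Long contracts can exceed what a local model handles comfortably in one prompt, so one common approach is to split the document into clauses and analyze each separately. The sketch below assumes clauses begin at the start of a line with a numbered heading such as `1.` or `2.1`, followed by text on the same line; `split_clauses` and `build_clause_prompt` are hypothetical helpers, and the prompt wording is only an example. Each resulting prompt could then be sent to a locally hosted model.

```python
import re
from typing import List

# Assumption: a clause starts on a new line with a number like "1." or "2.1".
# A bare number such as "30 days" at a line start is not treated as a heading.
CLAUSE_START = re.compile(r"^[ \t]*\d+\.(?:\d+\.?)*[ \t]+", re.MULTILINE)


def split_clauses(text: str) -> List[str]:
    """Split contract text at numbered clause headings, dropping empty pieces."""
    starts = [m.start() for m in CLAUSE_START.finditer(text)]
    if not starts:
        return [text.strip()] if text.strip() else []
    # Keep any preamble before the first numbered clause as its own piece.
    bounds = ([0] if starts[0] > 0 else []) + starts + [len(text)]
    pieces = [text[a:b].strip() for a, b in zip(bounds, bounds[1:])]
    return [p for p in pieces if p]


def build_clause_prompt(clause: str) -> str:
    """Wrap one clause in an analysis instruction for a local model."""
    return (
        "Identify the obligations, deadlines, and potential risks in the "
        "following contract clause. Answer in short bullet points.\n\n"
        f"Clause:\n{clause}"
    )
```

Splitting on headings rather than fixed character counts keeps each clause intact, so the model sees a complete provision instead of a fragment cut mid-sentence.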

AI Research and Experimentation

Researchers can leverage GPTLocalhost to experiment with various LLMs, compare their performance, and fine-tune models for specific tasks. They can test different prompts, evaluate response quality, and iterate on their AI models in a controlled, local environment, accelerating the research cycle.

Local Development

Developers can integrate LLMs into their applications without relying on external APIs. They can build chatbots, content generation tools, and other AI-powered features that run locally, reducing latency and improving responsiveness. This approach is ideal for offline applications or those requiring high data security.
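A local chatbot has to resend its conversation history on each request, and that history must stay within the model's context budget. The sketch below keeps a bounded history in the OpenAI-style message format that many local servers accept; that format, the `ChatSession` class, and the `max_turns` limit are assumptions introduced for illustration, not part of GPTLocalhost.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ChatSession:
    """Hypothetical helper that keeps a bounded chat history for a local model."""
    system_prompt: str
    max_turns: int = 5  # user/assistant pairs to keep; older turns are dropped
    history: List[Dict[str, str]] = field(default_factory=list)

    def add_turn(self, user_text: str, assistant_text: str) -> None:
        """Record one exchange, then trim the oldest messages if over the limit."""
        self.history.append({"role": "user", "content": user_text})
        self.history.append({"role": "assistant", "content": assistant_text})
        excess = len(self.history) - 2 * self.max_turns
        if excess > 0:
            del self.history[:excess]

    def messages_for(self, user_text: str) -> List[Dict[str, str]]:
        """Build the message list for the next request, system prompt first."""
        return (
            [{"role": "system", "content": self.system_prompt}]
            + self.history
            + [{"role": "user", "content": user_text}]
        )
```

Trimming whole user/assistant pairs from the front keeps the most recent context while the system prompt is always sent, which is a simple trade-off; token-based trimming would be more precise but depends on the model's tokenizer.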

Data Privacy Compliance

Organizations handling sensitive data can use GPTLocalhost to support compliance with data privacy regulations. Running LLMs locally keeps data inside their own secure environment, which reduces the risk of data breaches and helps meet requirements under regulations such as GDPR and HIPAA.

Who benefits from GPTLocalhost

AI Researchers

Researchers benefit from the ability to experiment with different LLMs locally, compare their performance, and fine-tune models without the constraints of cloud-based APIs. This allows for faster iteration and more control over the research process.

Developers

Developers can integrate LLMs into their applications with greater control over data privacy and latency. GPTLocalhost enables them to build AI-powered features that run locally, improving responsiveness and reducing reliance on external services.

Legal Professionals

Legal professionals can use GPTLocalhost to analyze contracts and other legal documents locally, ensuring data privacy and reducing the risk of data breaches. This improves efficiency and security during the contract review process.

Data Scientists

Data scientists can leverage GPTLocalhost to prototype and test LLM-based solutions in a secure and controlled environment. The ability to switch between models and run them locally accelerates the development and evaluation of AI applications.

Pricing of GPTLocalhost

Pricing details are not explicitly stated on the demo page. The listing marks GPTLocalhost as Free, which fits its focus on local execution and model switching; whether it is also open source is not confirmed.