Langfa

AI-powered Language Model API

Freemium

Supported platforms

web

What is Langfa

Langfa provides a robust API for interacting with large language models. It simplifies the process of integrating advanced AI capabilities into applications by offering a unified interface to various models. Unlike platforms that focus solely on model training or deployment, Langfa emphasizes ease of use and accessibility, abstracting away the complexities of model selection, management, and optimization. This allows developers to quickly experiment with different models, fine-tune prompts, and integrate AI features without deep expertise in machine learning. It's ideal for developers and businesses looking to add natural language processing, text generation, and other AI-driven functionalities to their products, improving user engagement and automating workflows.

Langfa's Core features

Unified API for Multiple Models

Langfa offers a single API endpoint that supports various language models, including GPT-3.5, GPT-4, and others. This abstraction simplifies model selection and switching: developers can try different models without significant code changes. The unified approach reduces integration time and makes it possible to choose a model dynamically based on performance or cost. The API handles model-specific nuances internally, giving developers one consistent interface across models.

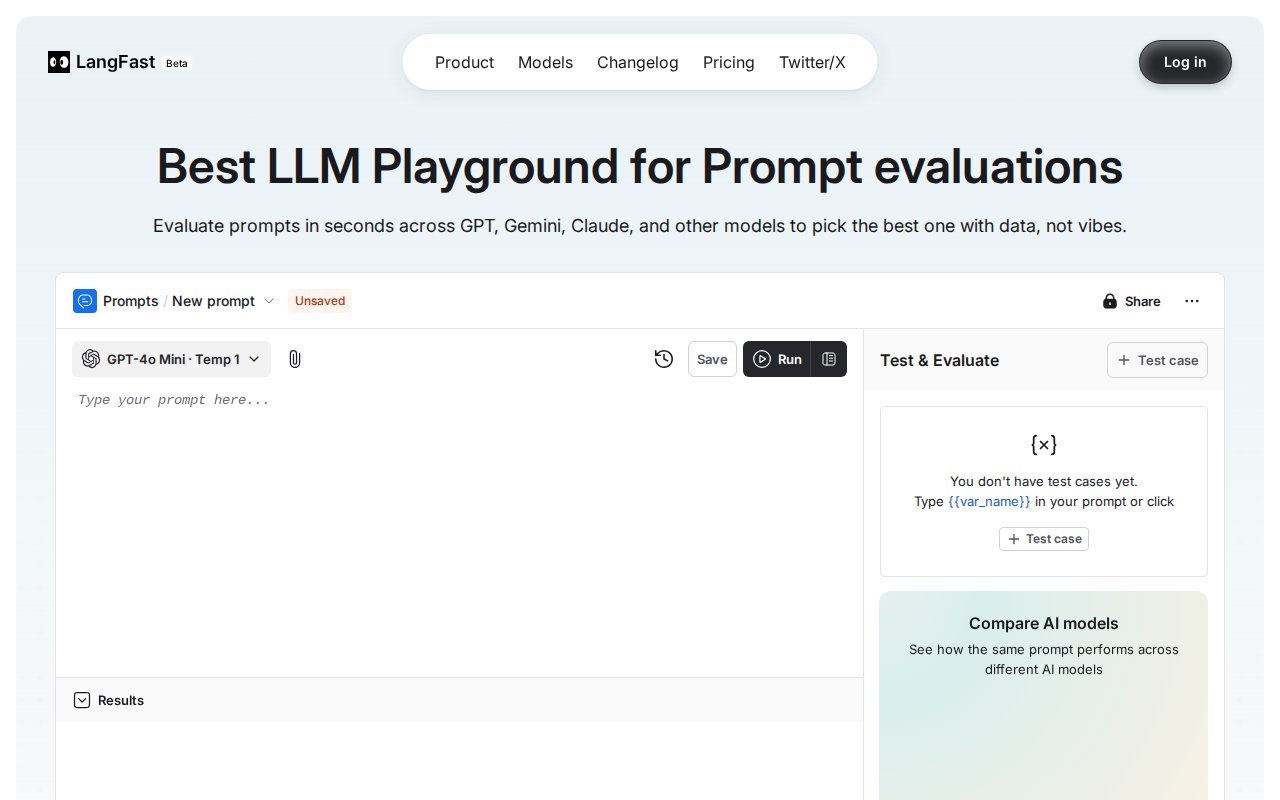
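To illustrate what a unified interface means in practice, here is a minimal sketch of the general routing pattern. It is not Langfa's implementation: `UnifiedClient`, `Completion`, and the two backend functions are hypothetical, and the backends return placeholder strings where a real adapter would call a model provider.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Completion:
    """One response shape shared by every backend."""
    text: str
    model: str


# Hypothetical backend adapters: each takes (prompt, temperature) and returns raw text.
# A real adapter would call a provider SDK here; these just echo for illustration.
def _backend_a(prompt: str, temperature: float) -> str:
    return f"[A t={temperature}] {prompt}"


def _backend_b(prompt: str, temperature: float) -> str:
    return f"[B t={temperature}] {prompt}"


class UnifiedClient:
    """Routes a single generate() call to whichever backend a model name maps to."""

    def __init__(self) -> None:
        self._routes: Dict[str, Callable[[str, float], str]] = {}

    def register(self, model: str, backend: Callable[[str, float], str]) -> None:
        self._routes[model] = backend

    def generate(self, prompt: str, model: str, temperature: float = 0.7) -> Completion:
        if model not in self._routes:
            raise ValueError(f"unknown model: {model}")
        raw = self._routes[model](prompt, temperature)
        # Normalize every backend's output into the same response type.
        return Completion(text=raw, model=model)


client = UnifiedClient()
client.register("gpt-3.5-turbo", _backend_a)
client.register("gpt-4", _backend_b)

# Switching models is a one-argument change; the calling code stays the same.
for name in ("gpt-3.5-turbo", "gpt-4"):
    print(client.generate("Hello", model=name).text)
```

The design choice is that callers only ever see `Completion`, so swapping a model for cost or quality reasons does not ripple through application code.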

Prompt Engineering Tools

Langfa provides built-in tools for prompt optimization and A/B testing. Users can create, save, and manage prompts within the Langfa platform and compare the outputs of different prompts on metrics such as coherence, relevance, and sentiment scores. This helps users refine their prompts to get better results from the language models, improving the quality of generated content and user satisfaction.
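The core of a prompt A/B comparison is simple: fill each prompt template with the same test inputs, score the outputs, and average per template. The sketch below assumes a crude keyword-overlap score as a stand-in for the relevance metric mentioned above (Langfa's actual scoring is not documented here), and `compare_prompts`, `keyword_relevance`, and `echo` are hypothetical names. `echo` replaces a real model call so the example runs offline.

```python
import re
from statistics import mean
from typing import Callable, Dict, List


def keyword_relevance(output: str, keywords: List[str]) -> float:
    """Crude relevance proxy: share of expected keywords that appear in the output."""
    words = set(re.findall(r"[a-z']+", output.lower()))
    hits = sum(1 for k in keywords if k.lower() in words)
    return hits / len(keywords) if keywords else 0.0


def compare_prompts(
    templates: Dict[str, str],
    cases: List[dict],
    generate: Callable[[str], str],
) -> Dict[str, float]:
    """Fill each template with every test case and average the relevance scores."""
    scores: Dict[str, float] = {}
    for name, template in templates.items():
        per_case = [
            keyword_relevance(generate(template.format(**case["vars"])), case["keywords"])
            for case in cases
        ]
        scores[name] = mean(per_case)
    return scores


def echo(prompt: str) -> str:
    # Hypothetical stand-in for a model call: returns the prompt unchanged.
    return prompt


templates = {
    "A": "Write an ad for {product}.",
    "B": "Write an ad for {product} that mentions price and delivery.",
}
cases = [
    {"vars": {"product": "shoes"}, "keywords": ["price", "delivery"]},
    {"vars": {"product": "lamps"}, "keywords": ["price"]},
]
print(compare_prompts(templates, cases, echo))
```

With a real model in place of `echo`, the same loop gives a per-template average that can be compared across prompt revisions.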

Real-time Usage Analytics

Langfa offers detailed analytics on API usage, including request counts, token usage, and latency metrics. The dashboard shows which models are used most frequently, how different prompts perform, and the overall cost of API calls. With this data, users can monitor spending, identify bottlenecks, and adjust their usage patterns to improve cost efficiency and performance.
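The dashboard figures are aggregations over individual API calls. As a sketch of that computation (not Langfa's analytics code), the snippet below groups hypothetical call records by model and derives request counts, total tokens, average latency, and cost. The `CallRecord` fields and the per-1K-token prices in `PRICE_PER_1K` are made-up values for illustration only.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CallRecord:
    model: str
    prompt_tokens: int
    completion_tokens: int
    latency_ms: float


# Hypothetical prices per 1,000 tokens; real prices come from the provider's pricing page.
PRICE_PER_1K = {"gpt-3.5-turbo": 0.002, "gpt-4": 0.06}


def summarize(records: List[CallRecord]) -> Dict[str, dict]:
    """Aggregate request count, tokens, average latency, and estimated cost per model."""
    stats: Dict[str, dict] = defaultdict(
        lambda: {"requests": 0, "tokens": 0, "latency_total": 0.0}
    )
    for r in records:
        s = stats[r.model]
        s["requests"] += 1
        s["tokens"] += r.prompt_tokens + r.completion_tokens
        s["latency_total"] += r.latency_ms

    report = {}
    for model, s in stats.items():
        report[model] = {
            "requests": s["requests"],
            "tokens": s["tokens"],
            "avg_latency_ms": s["latency_total"] / s["requests"],
            "cost": s["tokens"] / 1000 * PRICE_PER_1K[model],
        }
    return report


records = [
    CallRecord("gpt-3.5-turbo", 100, 400, 200.0),
    CallRecord("gpt-3.5-turbo", 200, 300, 400.0),
    CallRecord("gpt-4", 500, 500, 1000.0),
]
report = summarize(records)
print(report)
```

Note that this applies one price to all tokens; many providers price input and output tokens differently, which would need two rates per model.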

Model Versioning and Rollback

Langfa supports model versioning, allowing users to specify a particular model version for their API calls. This keeps results consistent and reproducible even as models are updated. If a newer version behaves unexpectedly or performs worse, users can roll back to a previous version. This is important for keeping applications that rely on language models stable.
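Pinning and rollback can be pictured as a per-alias history of version identifiers, where the newest entry is used for calls and rollback discards it. This is a minimal client-side sketch of that idea, assuming nothing about how Langfa stores versions; `ModelPins` and the identifiers `example-model-v1` and `example-model-v2` are hypothetical.

```python
from typing import Dict, List


class ModelPins:
    """Tracks which version each model alias uses, keeping history for rollback."""

    def __init__(self) -> None:
        self._history: Dict[str, List[str]] = {}

    def pin(self, alias: str, version: str) -> None:
        # Pinning a new version pushes it on top of the alias's history.
        self._history.setdefault(alias, []).append(version)

    def current(self, alias: str) -> str:
        return self._history[alias][-1]

    def rollback(self, alias: str) -> str:
        versions = self._history[alias]
        if len(versions) < 2:
            raise RuntimeError(f"no earlier version of {alias!r} to roll back to")
        versions.pop()  # discard the misbehaving version
        return versions[-1]


pins = ModelPins()
pins.pin("summarizer", "example-model-v1")  # hypothetical version identifiers
pins.pin("summarizer", "example-model-v2")
print(pins.current("summarizer"))
print(pins.rollback("summarizer"))
```

Every API call would then read its model from `pins.current(...)` rather than hard-coding a version, so a rollback takes effect everywhere at once.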

Rate Limiting and Cost Control

Langfa implements rate limiting to prevent abuse and manage costs. Users can cap the number of requests per minute or per day. The platform also provides cost estimation tools that predict the expense of API usage from the model, prompt length, and other parameters, helping users stay within budget and avoid unexpected charges.
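Both ideas can be sketched on the client side. The limiter below uses a sliding window of request timestamps, which is one common technique; Langfa's own limiting algorithm is not stated here. The cost estimate multiplies a token count by a per-1K-token price and assumes the output uses its full token budget. `SlidingWindowLimiter` and `estimate_cost` are hypothetical names, and the injectable `clock` parameter exists only to make the example testable.

```python
import time
from collections import deque
from typing import Callable, Deque


class SlidingWindowLimiter:
    """Allows at most `max_requests` within any `window_s`-second window."""

    def __init__(self, max_requests: int, window_s: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_requests = max_requests
        self.window_s = window_s
        self.clock = clock
        self._stamps: Deque[float] = deque()

    def allow(self) -> bool:
        now = self.clock()
        # Drop timestamps that have aged out of the window.
        while self._stamps and now - self._stamps[0] >= self.window_s:
            self._stamps.popleft()
        if len(self._stamps) < self.max_requests:
            self._stamps.append(now)  # only accepted requests count toward the limit
            return True
        return False


def estimate_cost(prompt_tokens: int, max_output_tokens: int, price_per_1k: float) -> float:
    """Upper-bound cost of one call, assuming the output uses its full token budget."""
    return (prompt_tokens + max_output_tokens) / 1000 * price_per_1k


limiter = SlidingWindowLimiter(max_requests=60, window_s=60.0)  # 60 requests per minute
if limiter.allow():
    print(f"estimated max cost: ${estimate_cost(500, 500, 0.002):.4f}")
```

A per-day limit is the same class with `window_s=86400`.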

How to use Langfa

1. Sign up for a Langfa account at langfa.st to obtain an API key.
2. Install the Langfa Python client using `pip install langfa`.
3. Import the Langfa client and initialize it with your API key: `from langfa import Langfa; client = Langfa(api_key="YOUR_API_KEY")`.
4. Use the `client.generate()` method, passing in a prompt and model parameters (e.g., model name, temperature): `response = client.generate(prompt="Write a short story about a cat.", model="gpt-3.5-turbo", temperature=0.7)`.
5. Process the response: `print(response.text)` displays the generated text.
6. Explore advanced features like prompt engineering, model comparison, and usage analytics through the Langfa dashboard.
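Because application code in steps 3 to 5 depends only on a `generate(...)` call that returns an object with `.text`, it can be tested offline against a stand-in. The sketch below assumes only that call shape; `FakeLangfa`, `FakeResponse`, and `write_story` are hypothetical names, and the stub does not reproduce any real Langfa behavior such as errors, rate limits, or token counts.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FakeResponse:
    text: str


class FakeLangfa:
    """Hypothetical offline stand-in mimicking the call shape in steps 3-5. Not the real client."""

    def __init__(self, api_key: str, canned_text: str = "Once upon a time, a cat...") -> None:
        self.api_key = api_key
        self.canned_text = canned_text
        self.calls: List[dict] = []  # recorded arguments, so tests can inspect them

    def generate(self, prompt: str, model: str, temperature: float = 0.7) -> FakeResponse:
        self.calls.append({"prompt": prompt, "model": model, "temperature": temperature})
        return FakeResponse(text=self.canned_text)


def write_story(client, topic: str) -> str:
    # Depends only on generate(...).text, so the real client or the stub both work.
    response = client.generate(
        prompt=f"Write a short story about {topic}.",
        model="gpt-3.5-turbo",
        temperature=0.7,
    )
    return response.text


stub = FakeLangfa(api_key="test-key")
print(write_story(stub, "a cat"))
```

In production, the client created in step 3 would be passed to `write_story` in place of the stub.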

Use cases of Langfa

Content Generation

Content creators can use Langfa to generate articles, blog posts, and social media updates. They input a topic or brief, and the API generates text, saving time and effort. For example, a marketing team can quickly produce multiple variations of ad copy for A/B testing, improving campaign performance.

Chatbot Development

Developers can integrate Langfa into chatbots to enhance their conversational abilities. Users can build more engaging and responsive chatbots that understand and respond to complex user queries. This improves customer service and user experience, as the chatbot can handle a wider range of interactions.
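For a chatbot to follow a multi-turn conversation, each request typically has to include recent history. The sketch below keeps a bounded window of turns and renders it into one plain-text prompt; the `role: text` layout, the `Conversation` class, and the `max_turns` cut-off are assumptions for illustration, not a format Langfa prescribes.

```python
from typing import List, Tuple


class Conversation:
    """Keeps the most recent turns and renders them into a single prompt string."""

    def __init__(self, system: str, max_turns: int = 6) -> None:
        self.system = system
        self.max_turns = max_turns
        self.turns: List[Tuple[str, str]] = []  # (role, text) pairs

    def add(self, role: str, text: str) -> None:
        self.turns.append((role, text))
        # Keep only the newest turns so the prompt stays within the model's context budget.
        if len(self.turns) > self.max_turns:
            self.turns = self.turns[-self.max_turns:]

    def to_prompt(self) -> str:
        lines = [self.system]
        lines += [f"{role}: {text}" for role, text in self.turns]
        lines.append("assistant:")  # cue the model to produce the next reply
        return "\n".join(lines)


conv = Conversation("You are a support bot.", max_turns=4)
conv.add("user", "Hi")
conv.add("assistant", "Hello! How can I help?")
conv.add("user", "Where is my order?")
print(conv.to_prompt())
```

The rendered string would be sent as the prompt, and the model's reply added back with `conv.add("assistant", ...)` before the next user message.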

Summarization and Text Analysis

Businesses can leverage Langfa to summarize long documents, extract key information, and perform sentiment analysis. Legal teams can use it to quickly review contracts, and financial analysts can summarize earnings reports. This improves efficiency and decision-making.
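Documents such as contracts or earnings reports can exceed what a model accepts in one request. One common approach (not something the source attributes to Langfa) is map-reduce summarization: split the text into chunks, summarize each, then summarize the partial summaries. In this sketch word counts approximate tokens, `summarize` is any hypothetical function that calls a model, and the final step assumes the joined partial summaries fit in one call.

```python
from typing import Callable, List


def chunk_words(text: str, max_words: int) -> List[str]:
    """Split text into consecutive chunks of at most `max_words` words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def summarize_long(text: str, summarize: Callable[[str], str], max_words: int = 800) -> str:
    """Map-reduce: summarize each chunk, then summarize the joined partial summaries."""
    chunks = chunk_words(text, max_words)
    if len(chunks) <= 1:
        return summarize(text)
    partials = [summarize(c) for c in chunks]  # map step
    # Reduce step; assumes the combined partials are short enough for one call.
    return summarize("\n".join(partials))


def first_two_words(text: str) -> str:
    # Hypothetical stand-in for a model-backed summarizer.
    return " ".join(text.split()[:2])


print(summarize_long("a b c d e f g", first_two_words, max_words=3))
```

For very long inputs the reduce step can itself be chunked again; that is left out here to keep the sketch short.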

Code Generation and Documentation

Software developers can use Langfa to generate code snippets and documentation from natural language descriptions. They can describe the desired functionality, and the API generates the code, reducing development time and improving code quality. This is particularly useful for rapid prototyping and automating repetitive tasks.

Who benefits from Langfa

Software Developers

Developers benefit from Langfa's easy-to-integrate API, which allows them to quickly add AI-powered features to their applications. They can focus on building their core product instead of managing complex model integrations. This accelerates development cycles and reduces the need for specialized AI expertise.

Content Creators

Content creators can streamline their workflow by using Langfa to generate various types of content, from articles to social media posts. The platform's prompt engineering tools help them optimize content quality and tailor it to their specific needs, saving time and improving output.

Businesses

Businesses can improve customer service, automate content creation, and gain insights from data using Langfa. The API enables them to build chatbots, summarize documents, and perform sentiment analysis, leading to increased efficiency and better decision-making.

Researchers

Researchers can use Langfa to experiment with different language models and prompts for their studies. The platform's analytics and versioning features provide valuable data and ensure reproducibility, facilitating the research process and enabling more robust findings.

Pricing of Langfa

Free tier available with limited usage. Paid plans offer increased request limits, priority support, and access to advanced features. Specific pricing details are available on the website.