ONNX Runtime

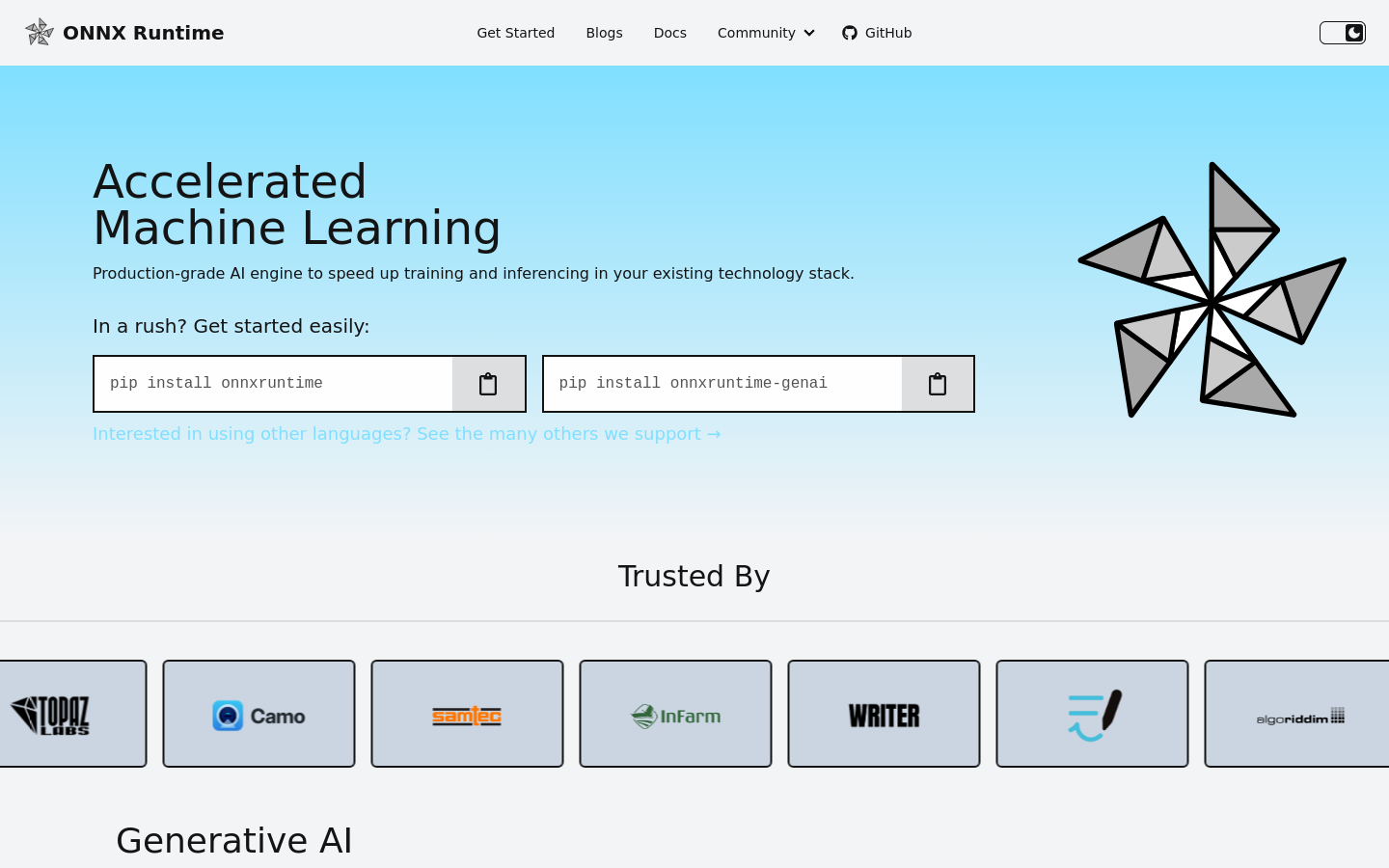

A cross-platform machine learning engine for high-performance model inference.

Free and open source (MIT license)

Supported platforms

web

What is ONNX Runtime

ONNX Runtime is a production-grade engine for running machine learning models efficiently across different hardware and software environments. It provides a single interface for inference and training, so teams can deploy the same model on CPUs, GPUs, and NPUs. It is designed to keep latency low and throughput high for both Large Language Models (LLMs) and conventional predictive models.

The runtime offers APIs for Python, C#, C++, Java, JavaScript, and Rust, so it fits into mixed technology stacks. It runs on Linux, Windows, macOS, mobile platforms, and web browsers, which helps developers keep model behavior consistent between development and production. Engineers can then spend their time on application features rather than on hardware compatibility or performance regressions.

ONNX Runtime's core features

Hardware Acceleration

Optimizes latency, throughput, and memory utilization across a wide range of hardware, including CPUs, GPUs, and NPUs, so the same model can run efficiently on different devices.
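In the Python API, hardware targets are selected through execution providers passed to 'InferenceSession'. The sketch below shows one way to pick providers in order of preference. The helper names, the preference order, and the idea of always appending the CPU provider as a fallback are assumptions made for illustration, not a recommendation from the ONNX Runtime project.

```python
# Assumed preference order; adjust to the hardware you actually target.
DEFAULT_PREFERENCE = (
    "CUDAExecutionProvider",    # NVIDIA GPUs
    "CoreMLExecutionProvider",  # Apple devices
    "CPUExecutionProvider",     # portable fallback
)


def choose_providers(available, preferred=DEFAULT_PREFERENCE):
    """Return the preferred providers that are available, in preference order.

    The CPU provider is appended as a last resort if it was not already chosen.
    """
    chosen = [name for name in preferred if name in available]
    if "CPUExecutionProvider" not in chosen:
        chosen.append("CPUExecutionProvider")
    return chosen


def open_accelerated_session(model_path):
    """Hypothetical helper: open a session on the best provider found."""
    import onnxruntime as ort  # lazy import; install with `pip install onnxruntime`

    providers = choose_providers(ort.get_available_providers())
    return ort.InferenceSession(model_path, providers=providers)
```

Calling 'open_accelerated_session' with the path to an ONNX file would then prefer a GPU when the installed package exposes one and fall back to the CPU otherwise.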

Cross-Platform Support

Provides robust compatibility across major operating systems like Linux, Windows, and macOS, as well as mobile platforms and web browsers, allowing for a truly portable AI strategy.

Multi-Language Support

Offers native integration for developers using Python, C#, C++, Java, JavaScript, and Rust, making it easy to incorporate high-performance AI into diverse and existing technology stacks.

Generative AI Integration

Enables the deployment of state-of-the-art Large Language Models, supporting advanced tasks like text generation and image synthesis directly within your production applications.

How to use ONNX Runtime

- Begin by installing the runtime package with your package manager: 'pip install onnxruntime' for general inference, or 'pip install onnxruntime-genai' if you plan to run generative models.

- Initialize the runtime by passing the file path of your machine learning model into the 'InferenceSession' class, which prepares the engine to execute your specific model.

- Format your input data into the required tensor structure, ensuring it aligns with the model's expected input schema to prevent runtime errors during processing.

- Execute the model by calling 'session.run' with the list of output names you want (or None for all outputs) and a dictionary that maps each input name to your prepared data.

- Read the list of outputs returned by the session and pass the predictions on to your application workflow or service logic.
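The steps above can be sketched in Python as follows. This is a minimal outline rather than a complete application: the helper names are hypothetical, the session is pinned to the CPU provider for simplicity, and 'predict_file' assumes a model with exactly one input.

```python
def open_session(model_path):
    """Step 2: load the model file into an InferenceSession (CPU only here)."""
    import onnxruntime as ort  # lazy import; install with `pip install onnxruntime`

    return ort.InferenceSession(model_path, providers=["CPUExecutionProvider"])


def input_schema(session):
    """Step 3: list (name, shape, element type) for each input the model declares."""
    return [(arg.name, arg.shape, arg.type) for arg in session.get_inputs()]


def run_named(session, feed):
    """Steps 4 and 5: run the model and return its outputs keyed by output name."""
    names = [arg.name for arg in session.get_outputs()]
    values = session.run(names, feed)
    return dict(zip(names, values))


def predict_file(model_path, array):
    """Hypothetical end-to-end helper for a model with a single input.

    `array` should be a NumPy array whose shape and dtype match the schema.
    """
    session = open_session(model_path)
    first_input_name = input_schema(session)[0][0]
    return run_named(session, {first_input_name: array})
```

Printing the result of 'input_schema' before building the input is a cheap way to catch shape or dtype mismatches, which are a common cause of errors at 'session.run' time.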

Use cases of ONNX Runtime

Edge AI Deployment

Developers can deploy high-performance AI models on resource-constrained devices like mobile phones or IoT hardware by tuning runtime settings such as thread counts and the graph optimization level.
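As an illustration of such a configuration in the Python API, the sketch below caps thread usage and enables all graph optimizations through 'SessionOptions'. The helper names and the chosen values are assumptions for a small CPU-only device; suitable settings depend on the model and hardware and should be measured rather than copied.

```python
def tune_for_small_device(options, optimization_level, threads=1):
    """Apply conservative settings to a SessionOptions-like object.

    Sets the attributes intra_op_num_threads, inter_op_num_threads and
    graph_optimization_level, then returns the same object.
    """
    options.intra_op_num_threads = threads   # threads used inside one operator
    options.inter_op_num_threads = 1         # no parallelism across operators
    options.graph_optimization_level = optimization_level
    return options


def open_edge_session(model_path, threads=1):
    """Hypothetical helper: open a CPU session with the settings above."""
    import onnxruntime as ort  # lazy import; install with `pip install onnxruntime`

    options = tune_for_small_device(
        ort.SessionOptions(),
        ort.GraphOptimizationLevel.ORT_ENABLE_ALL,
        threads,
    )
    return ort.InferenceSession(
        model_path,
        sess_options=options,
        providers=["CPUExecutionProvider"],
    )
```

Keeping the tuning logic separate from session creation makes it easy to try different thread counts on the target device and compare latency.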

Production Model Serving

Engineers can reliably serve machine learning models in production environments, ensuring that end-user applications benefit from low latency and high throughput.

Cross-Platform Application Development

Teams building applications for multiple platforms can use a single, unified runtime to maintain consistent AI performance across desktop, mobile, and web environments.

Who benefits from ONNX Runtime

Machine Learning Engineers

Professionals focused on optimizing model inference speed and resource efficiency to ensure their AI applications meet production-grade performance standards.

Software Developers

Developers integrating AI into applications across various languages who need a reliable, high-performance execution engine that fits into their existing stack.