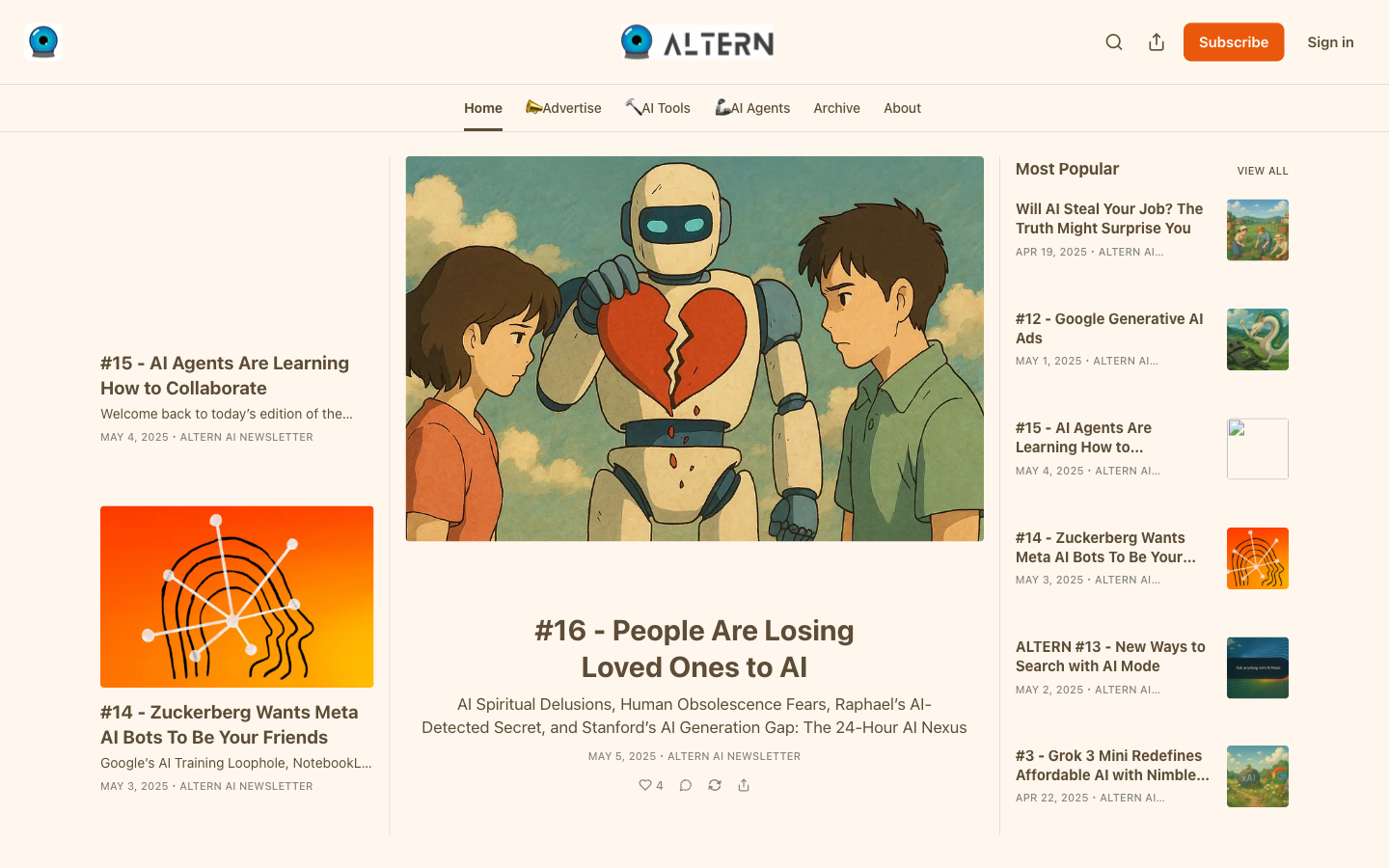

localbench

GGUF Quantization Benchmarks

Free

Supported platforms

web

What is localbench

localbench provides data-driven performance analysis for GGUF-formatted large language models (LLMs). Unlike generic benchmarks that rely on static datasets such as Wikipedia, localbench evaluates model quality using KL divergence across 250,000 tokens of real-world task data. It specifically compares quantizations published by major uploaders such as Unsloth and Bartowski, giving developers a transparent look at how different quantization methods affect output quality and reasoning. It is aimed at engineers optimizing local LLM deployments who need to balance hardware constraints against output fidelity.

localbench's core features

KL Divergence Benchmarking

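Uses Kullback-Leibler (KL) divergence to measure how far the quantized GGUF model's next-token probability distributions drift from those of the original FP16 model. This gives a mathematically grounded measure of the information lost during quantization. Perplexity only looks at the probability assigned to the reference token, so a quantized model can match the original's perplexity while its predictions differ; KL divergence compares the full distribution at every token position, which makes it a better indicator of how well a model retains its original behavior after compression.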
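A minimal sketch of the metric, assuming you already have aligned next-token logits from the FP16 model and from the quantized model for the same text (how localbench extracts and stores logits is not described here). For one token position with baseline distribution P and quantized distribution Q over the vocabulary, $$D_{\mathrm{KL}}(P \,\|\, Q) = \sum_{v} P(v) \log \frac{P(v)}{Q(v)}$$ and this sketch averages that value over all positions. The function names are hypothetical.

```python
import math
from typing import Sequence


def log_softmax(logits: Sequence[float]) -> list[float]:
    """Numerically stable log-softmax over one vocabulary-sized logit vector."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def token_kl(base_logits: Sequence[float], quant_logits: Sequence[float]) -> float:
    """KL(P_base || Q_quant) for a single token position, in nats."""
    log_p = log_softmax(base_logits)
    log_q = log_softmax(quant_logits)
    return sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))


def mean_kl(base: Sequence[Sequence[float]], quant: Sequence[Sequence[float]]) -> float:
    """Average per-token KL divergence over aligned positions of the same text."""
    if len(base) != len(quant) or not base:
        raise ValueError("need the same, non-zero number of token positions")
    return sum(token_kl(b, q) for b, q in zip(base, quant)) / len(base)
```

Working from log-probabilities rather than raw probabilities avoids underflow on large vocabularies, and a value of 0 means the quantized model reproduces the baseline distribution exactly.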

Real-World Task Evaluation

Benchmarks are run over 250,000 tokens of real-world, domain-specific tasks rather than standard academic datasets. The aim is for results to reflect how models behave in production workloads such as code generation, summarization, and instruction following, rather than how closely they reproduce static reference text.
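A single average over a mixed corpus can hide a quantization that holds up on prose but degrades on code. A minimal sketch of a per-task breakdown, assuming each scored token carries a task label; the record layout and the labels are hypothetical and not localbench's own categories.

```python
from collections import defaultdict
from typing import Iterable


def kl_by_task(records: Iterable[tuple[str, float]]) -> dict[str, float]:
    """Mean per-token KL divergence for each task label.

    `records` yields (task_label, token_kl) pairs, e.g. ("code", 0.02);
    the labels are illustrative placeholders.
    """
    totals: dict[str, float] = defaultdict(float)
    counts: dict[str, int] = defaultdict(int)
    for task, kl in records:
        totals[task] += kl
        counts[task] += 1
    # Plain dict of per-task means; tasks with no tokens never appear.
    return {task: totals[task] / counts[task] for task in totals}
```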

Uploader Comparative Analysis

Directly compares quantized files from different uploaders such as Unsloth and Bartowski. This helps users identify which quantization pipelines produce the most faithful GGUF files and avoid uploads degraded by suboptimal quantization parameters or conversion scripts.
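Comparing uploaders is only meaningful at a fixed quantization level, measured against the same FP16 baseline on the same evaluation text. A minimal sketch of that grouping, assuming a table of mean KL values keyed by (uploader, quant level); the key layout and function name are hypothetical, and the input values should come from the published reports.

```python
from typing import Mapping


def best_uploader_per_quant(
    mean_kl: Mapping[tuple[str, str], float],
) -> dict[str, tuple[str, float]]:
    """For each quant level, return the (uploader, mean KL) pair with the lowest KL.

    Keys are (uploader, quant_level), e.g. ("unsloth", "Q4_K_M"). All values
    must come from the same base model and evaluation text to be comparable.
    """
    best: dict[str, tuple[str, float]] = {}
    for (uploader, quant), kl in mean_kl.items():
        # Keep the first entry seen on ties; replace only on strictly lower KL.
        if quant not in best or kl < best[quant][1]:
            best[quant] = (uploader, kl)
    return best
```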

Hardware-Aware Optimization

Focuses on GGUF, the model file format used by llama.cpp and widely adopted for CPU/GPU hybrid inference. By showing how much quality each quantization level gives up, localbench helps developers on consumer-grade hardware choose a file that leaves room for their target context window and token throughput without exceeding local VRAM.
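Whether a quantization fits depends on more than the file size: the KV cache grows linearly with context length. A rough, back-of-the-envelope sketch, assuming an FP16 KV cache and a fixed allowance for runtime buffers (the 0.5 GiB default is a guess, not a measured figure); function names are hypothetical.

```python
def weight_bytes(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the quantized weights: parameters x bits / 8."""
    return n_params * bits_per_weight / 8


def kv_cache_bytes(n_layers: int, n_ctx: int, n_kv_heads: int, head_dim: int,
                   bytes_per_elem: int = 2) -> int:
    """K and V caches: 2 tensors per layer, one head_dim vector per token per KV head."""
    return 2 * n_layers * n_ctx * n_kv_heads * head_dim * bytes_per_elem


def fits_in_vram(vram_bytes: float, n_params: float, bits_per_weight: float,
                 n_layers: int, n_ctx: int, n_kv_heads: int, head_dim: int,
                 overhead_bytes: float = 0.5 * 2**30) -> bool:
    """Rough check; overhead_bytes stands in for compute buffers (an assumption)."""
    total = (weight_bytes(n_params, bits_per_weight)
             + kv_cache_bytes(n_layers, n_ctx, n_kv_heads, head_dim)
             + overhead_bytes)
    return total <= vram_bytes
```

For a Llama-3-8B-style configuration (32 layers, 8 KV heads, head dimension 128), `kv_cache_bytes(32, 8192, 8, 128)` is exactly 2^30 bytes (1 GiB) at an 8,192-token context. Q8_0, for instance, stores blocks of 32 8-bit weights plus one FP16 scale, i.e. 8.5 bits per weight. On a hybrid CPU/GPU setup, layers that do not fit can stay in system RAM at a throughput cost, which this check ignores.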

Transparent Methodology

Provides full visibility into the testing pipeline. By documenting the exact token counts and task types used for evaluation, localbench allows for reproducible results, enabling the community to verify the quality of specific model uploads before committing to large downloads or production integration.
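Reproducing a result also requires testing the exact same file. Hugging Face typically displays a SHA-256 checksum for large (LFS-tracked) files such as GGUF uploads; a minimal sketch for checking a local download against such a checksum follows. Whether a given localbench report records checksums is an assumption, and the function names are hypothetical.

```python
from __future__ import annotations

import hashlib
from pathlib import Path


def sha256_of_file(path: str | Path, chunk_size: int = 1 << 20) -> str:
    """Hex SHA-256 of a file, read in chunks so multi-gigabyte GGUFs never sit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_benchmarked_file(path: str | Path, expected_sha256: str) -> bool:
    """True if the local file is byte-identical to the one whose hash was recorded."""
    return sha256_of_file(path) == expected_sha256.strip().lower()
```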

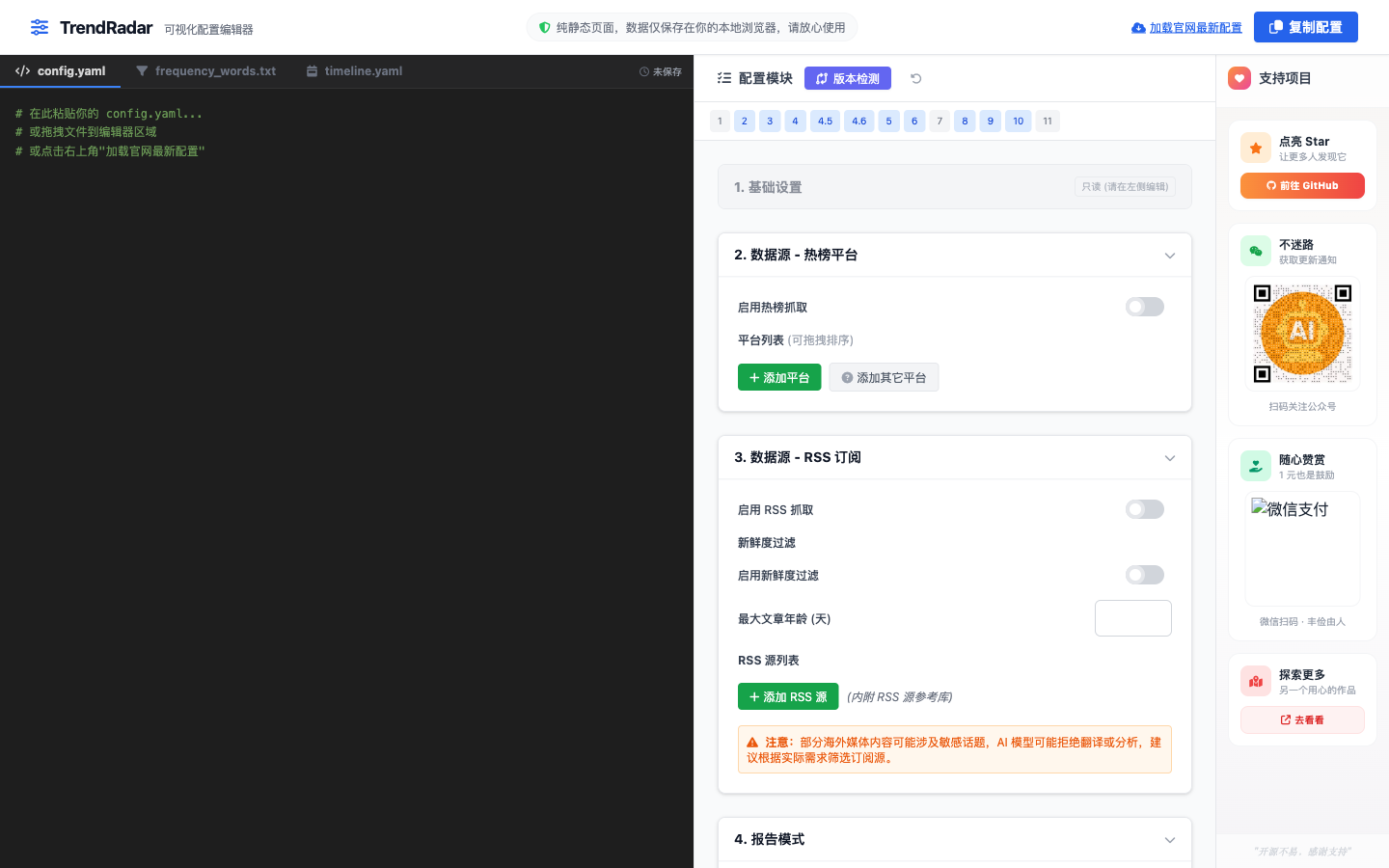

How to use localbench

1. Navigate to the localbench Substack archive to access the latest quantization reports.
2. Identify the model architecture and quantization level (e.g., Q4_K_M, Q6_K) relevant to your hardware.
3. Review the KL divergence metrics to compare the accuracy loss between different uploaders.
4. Select the GGUF file that offers the optimal trade-off between VRAM usage and task-specific performance.
5. Download the chosen model file from the linked repository (e.g., Hugging Face) for use in your local inference engine.
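Steps 3 and 4 amount to a constrained choice: among the files that fit your memory budget, take the one with the lowest KL divergence. A minimal sketch, assuming you have copied each candidate's file size and published mean KL into a small record; the `GgufCandidate` type and `pick_gguf` function are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class GgufCandidate:
    # Hypothetical record: one downloadable file plus its published mean KL.
    uploader: str
    quant: str
    file_size_bytes: int
    mean_kl: float


def pick_gguf(candidates: Sequence[GgufCandidate], budget_bytes: int) -> Optional[GgufCandidate]:
    """Lowest-KL file whose size fits the budget; None if nothing fits.

    File size is only a proxy for weight memory: leave headroom for the
    KV cache and runtime buffers when choosing budget_bytes.
    """
    fitting = [c for c in candidates if c.file_size_bytes <= budget_bytes]
    return min(fitting, key=lambda c: c.mean_kl, default=None)
```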

Use cases of localbench

Optimizing Local LLM Inference

AI engineers building local RAG pipelines use localbench to select the Q4 or Q5 quantization with the lowest KL divergence, maintaining accuracy while fitting the model within 8 GB or 16 GB VRAM constraints.

Model Selection for Production

Developers choosing between multiple GGUF versions of the same model use the KL divergence data to check which uploader's file stays closest to the original model, reducing the risk of shipping a file whose outputs have drifted from it.

Quantization Pipeline Validation

Researchers and model fine-tuners use the benchmarks to validate their own quantization scripts, comparing their results against the published figures to check that their conversion process is not introducing unnecessary error.
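A mean KL can look fine while a handful of tokens diverge badly, so a validation check benefits from comparing the tail as well. A minimal sketch, assuming you have per-token KL values for your own quant and for a reference quant of the same model, both computed on the same text against the same FP16 baseline; the 99th-percentile cut-off and the 5% tolerance are illustrative assumptions, not localbench criteria.

```python
import math
from typing import Sequence


def percentile(values: Sequence[float], q: float) -> float:
    """Nearest-rank percentile for q in (0, 100]."""
    if not values or not 0 < q <= 100:
        raise ValueError("need non-empty values and 0 < q <= 100")
    ordered = sorted(values)
    rank = max(1, math.ceil(q * len(ordered) / 100))
    return ordered[rank - 1]


def within_reference(own_kl: Sequence[float], ref_kl: Sequence[float],
                     tolerance: float = 1.05) -> bool:
    """Check mean and 99th-percentile token KL against a reference quant.

    `tolerance` (allowing up to 5% worse) is an arbitrary illustrative threshold.
    """
    own_mean = sum(own_kl) / len(own_kl)
    ref_mean = sum(ref_kl) / len(ref_kl)
    return (own_mean <= ref_mean * tolerance
            and percentile(own_kl, 99) <= percentile(ref_kl, 99) * tolerance)
```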

Who benefits from localbench

AI Infrastructure Engineers

Need to deploy LLMs on local hardware and require precise data on how quantization affects model output quality to ensure production-grade reliability.

Local LLM Enthusiasts

Power users running models like Llama 3 or Mistral locally who want to squeeze the best performance out of their consumer GPUs.

Model Quantizers

Creators who upload GGUF models to Hugging Face and want to verify the quality of their conversions against other uploads of the same model.

Pricing of localbench

The content is provided for free via the localbench Substack. No subscription is required to access the research and benchmark data.