Apache Airflow

Programmatic Workflow Orchestration

Free

Supported platforms

web

What is Apache Airflow

Apache Airflow is a platform for programmatically authoring, scheduling, and monitoring workflows. Pipelines are defined as Python code, which gives users flexibility and control over data processing tasks. Airflow's core value is orchestration: it runs tasks in the correct order and enforces the dependencies between them. Unlike plain cron jobs or ad hoc scripts, Airflow also provides a web UI for monitoring, logging, and managing workflows at scale. Its modular architecture and integrations with cloud providers and third-party services make it adaptable to diverse environments. Data engineers, data scientists, and DevOps teams use Airflow to automate data pipelines, machine learning model training, and infrastructure management.

Apache Airflow's core features

Python-Based Workflow Definition

Airflow uses Python for defining workflows (DAGs), allowing developers to leverage the full power of the Python ecosystem. This includes using standard Python features like loops, conditional statements, and date/time formats for scheduling. This approach provides flexibility and avoids the limitations of command-line or XML-based workflow definitions, enabling dynamic pipeline generation and complex logic implementation.
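To make the idea of a workflow as a directed acyclic graph concrete, here is a minimal plain-Python sketch. It uses only the standard library (`graphlib`, Python 3.9+) and is not Airflow's own API; the task names and the `run_workflow` helper are hypothetical and exist only for illustration.

```python
from graphlib import TopologicalSorter


def run_workflow(tasks, upstream):
    """Run every task after all of its upstream tasks; return the run order."""
    order = []
    # static_order() yields each node only after all of its predecessors.
    for name in TopologicalSorter(upstream).static_order():
        tasks[name]()
        order.append(name)
    return order


log = []

# Hypothetical tasks: each one just records that it ran.
tasks = {
    "extract": lambda: log.append("extract"),
    "transform": lambda: log.append("transform"),
    "load": lambda: log.append("load"),
}

# Maps each task to the set of tasks it depends on.
upstream = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
}

run_order = run_workflow(tasks, upstream)
```

If the dependency mapping contained a cycle, `TopologicalSorter` would raise `graphlib.CycleError`, which is why the graph has to be acyclic. Airflow layers scheduling, retries, and distributed execution on top of this basic ordering idea.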

Scalable and Modular Architecture

Airflow's modular design allows it to scale to a large number of concurrent tasks. With a distributed executor such as the CeleryExecutor, it uses a message broker (e.g., RabbitMQ or Redis) to dispatch tasks to workers, so capacity can be scaled horizontally by adding more worker nodes. This architecture lets Airflow manage complex workflows with numerous dependencies, making it suitable for enterprise-level deployments.

Robust Web UI

Airflow provides a user-friendly web UI for monitoring, scheduling, and managing workflows. The UI offers real-time task status updates, detailed logs, and the ability to trigger, pause, and retry tasks. This centralized interface simplifies workflow management, provides insights into pipeline performance, and reduces the need for manual intervention, improving operational efficiency.

Extensive Integrations

Airflow offers a wide range of pre-built operators for integrating with various services, including Google Cloud Platform, Amazon Web Services, Microsoft Azure, and many third-party tools. These operators simplify the process of executing tasks on different platforms, reducing the need for custom scripting and accelerating the development of data pipelines and other workflows.

Dynamic Pipeline Generation

Airflow allows for dynamic pipeline generation through Python code. This means you can write code that instantiates pipelines dynamically, based on data or other parameters. This feature is particularly useful for handling recurring tasks, processing data from multiple sources, or creating pipelines that adapt to changing business requirements, enhancing flexibility and automation.
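The pattern can be sketched in plain Python as a loop that builds one pipeline definition per input. This is an illustration under stated assumptions, not Airflow code: `build_pipeline`, the `ingest_` prefix, and the source names are all hypothetical. In an Airflow DAG file, the analogous loop would create one DAG object per item.

```python
def build_pipeline(source):
    """Return a task-dependency mapping for one data source."""
    extract, clean, load = (
        f"{step}_{source}" for step in ("extract", "clean", "load")
    )
    # Each task maps to the set of tasks it depends on.
    return {extract: set(), clean: {extract}, load: {clean}}


# Hypothetical source names; in practice these might come from a config file.
SOURCES = ["orders", "customers", "invoices"]

# One pipeline per source, generated rather than written by hand.
pipelines = {f"ingest_{source}": build_pipeline(source) for source in SOURCES}
```

Adding a new source then means adding one entry to `SOURCES` instead of copying and editing a whole pipeline definition.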

Open Source and Community Driven

Airflow is an open-source project with a vibrant community. This means that users can contribute to the project, share their experiences, and access a wealth of resources and support. The open-source nature fosters transparency, collaboration, and continuous improvement, ensuring that Airflow remains a powerful and adaptable workflow orchestration platform.

How to use Apache Airflow

1. Install Airflow using pip: `pip install apache-airflow`.
2. Initialize the Airflow database: `airflow db init` (`airflow db migrate` on Airflow 2.7 and later).
3. Create a DAG (Directed Acyclic Graph) in Python, defining your workflow tasks and dependencies.
4. Place your DAG file in the `dags` folder within your Airflow home directory.
5. Start the Airflow webserver and scheduler: `airflow webserver -p 8080` and `airflow scheduler` (Airflow 3 replaces `airflow webserver` with `airflow api-server`).
6. Access the Airflow UI in your browser (usually at `http://localhost:8080`) to monitor and manage your workflows.

Use cases of Apache Airflow

Data Pipeline Automation

Data engineers use Airflow to automate the ETL (Extract, Transform, Load) process. They define DAGs to extract data from various sources, transform it using tools like Spark or Pandas, and load it into a data warehouse. This ensures data is consistently updated and ready for analysis, saving time and resources.
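As a rough illustration of the three ETL stages, the sketch below uses only the Python standard library, with an in-memory SQLite database standing in for a data warehouse. The sample data, the `payments` table, and the function names are made up for this example; in an Airflow deployment each function would typically become its own task.

```python
import csv
import io
import sqlite3

# Made-up sample data standing in for a real source system.
RAW_CSV = "id,amount\n1,10.50\n2,4.25\n"


def extract(text):
    """Parse CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Convert string fields to typed values, storing amounts as integer cents."""
    return [(int(r["id"]), round(float(r["amount"]) * 100)) for r in rows]


def load(records, conn):
    """Insert the transformed records into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS payments "
        "(id INTEGER PRIMARY KEY, amount_cents INTEGER)"
    )
    conn.executemany("INSERT INTO payments VALUES (?, ?)", records)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
total_cents = conn.execute("SELECT SUM(amount_cents) FROM payments").fetchone()[0]
```

Keeping the stages as separate functions mirrors how an orchestrator treats them: each stage can be retried, logged, and monitored on its own.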

Machine Learning Model Training

Data scientists use Airflow to schedule and manage the training of machine learning models. They create DAGs to orchestrate data preprocessing, model training, evaluation, and deployment. This automates the ML lifecycle, ensuring models are retrained regularly with the latest data and deployed efficiently.

Infrastructure Management

DevOps engineers use Airflow to automate infrastructure tasks, such as provisioning servers, deploying applications, and managing cloud resources. They create DAGs to orchestrate these tasks, ensuring they are executed in the correct order and with the necessary dependencies, improving operational efficiency.

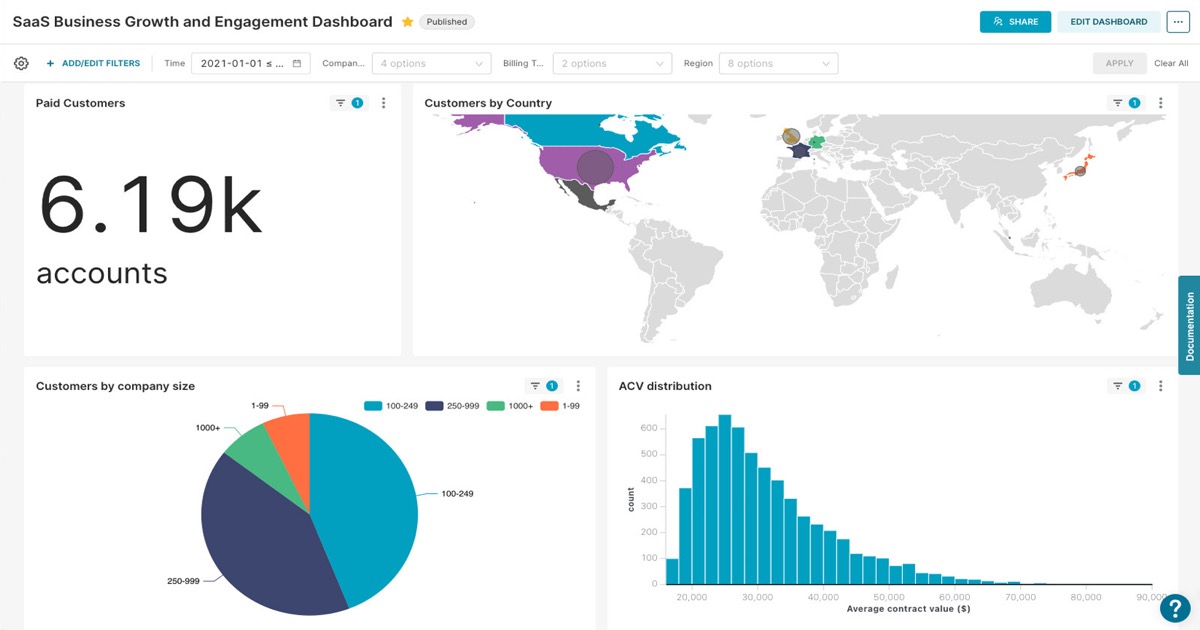

Reporting and Analytics

Analysts use Airflow to automate the generation of reports and dashboards. They define DAGs to extract data from databases, transform it, and generate reports using tools like SQL or Python scripts. This ensures that reports are generated on a regular schedule and provide up-to-date insights.

Who benefits from Apache Airflow

Data Engineers

Data engineers use Airflow to build and manage data pipelines. They automate ETL processes with it, ensuring data is transformed and loaded efficiently. Airflow's scheduling and monitoring capabilities help them maintain data quality and reduce manual intervention.

Data Scientists

Data scientists use Airflow to automate the machine learning lifecycle. They create workflows for data preprocessing, model training, evaluation, and deployment. This allows them to focus on model development and analysis rather than manual orchestration.

DevOps Engineers

DevOps engineers use Airflow to automate infrastructure tasks. They create workflows for provisioning servers, deploying applications, and managing cloud resources. This improves operational efficiency and reduces the risk of errors.

Business Analysts

Business analysts use Airflow to automate reporting and analytics processes. They create workflows to extract data, transform it, and generate reports. This ensures that reports are generated on a regular schedule and provide up-to-date insights.

Pricing of Apache Airflow

Apache License 2.0 (Open Source). Free to use, modify, and distribute.