AngelSlim

Efficient Model Deployment for Large Language Models

Verdict

Best for deploying large language models on resource-constrained devices

AngelSlim is a large language model compression toolkit designed to help developers compress large language models and deploy them efficiently.

Best for

Machine learning engineers and researchers

Key Features

Quantization

Speculative Decoding

Pruning

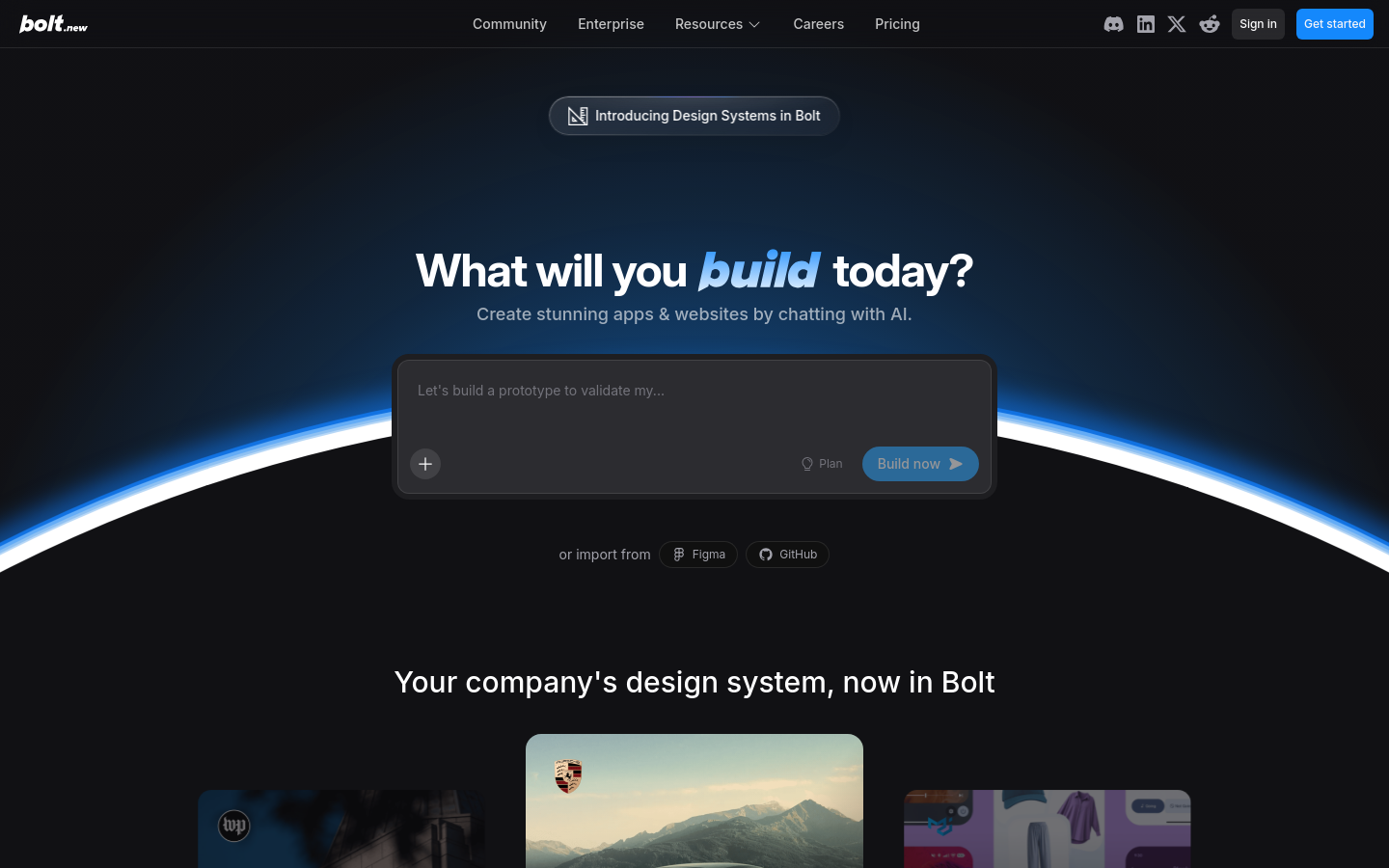

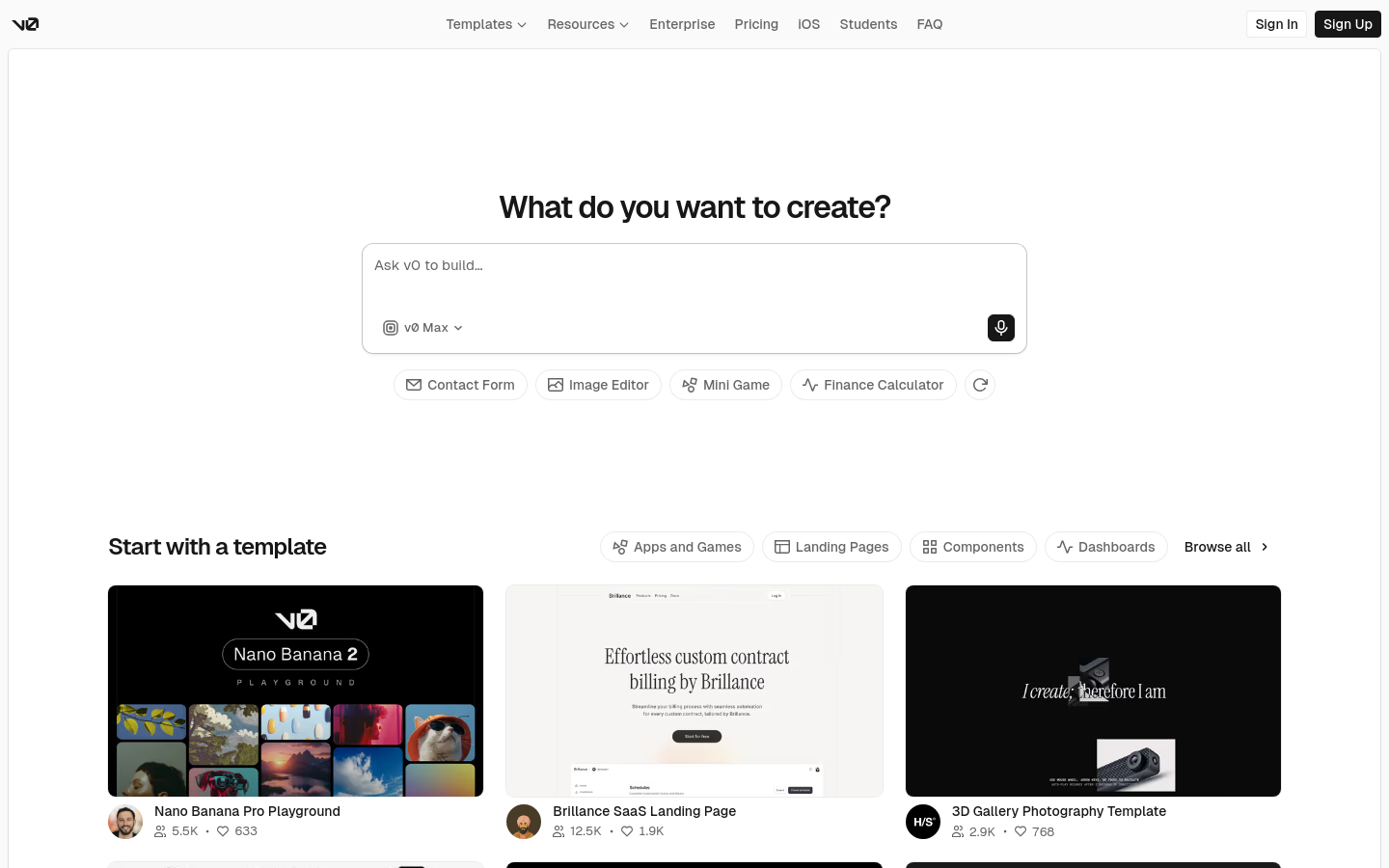

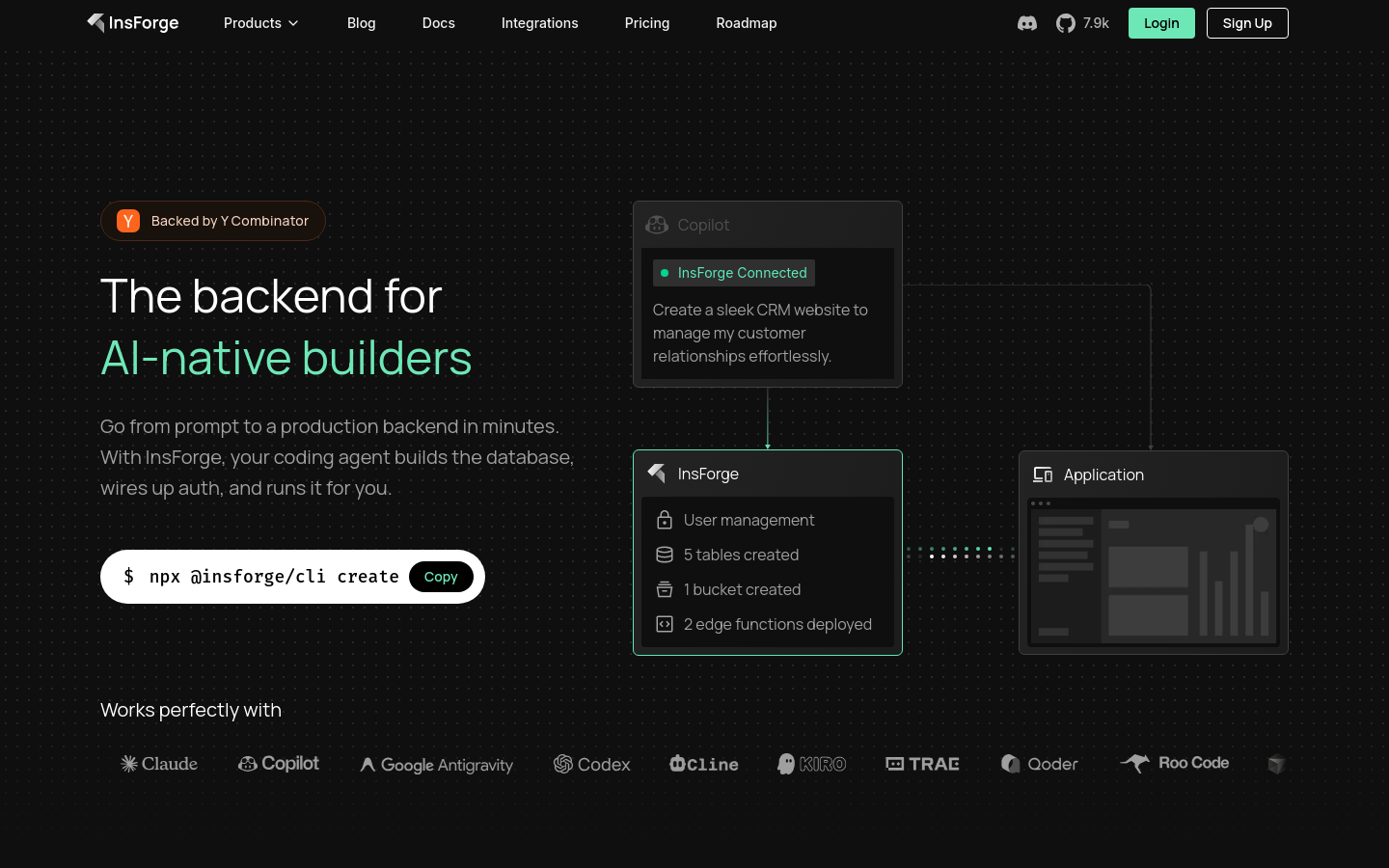

AngelSlim Screenshots & Demo

What is AngelSlim

AngelSlim is an open-source toolkit for compressing large language models and deploying them efficiently. It combines several techniques that shrink a model's memory footprint or speed up inference: quantization, pruning, and distillation reduce the model itself, while speculative decoding accelerates text generation. Together these lower the memory and compute a model needs, so it can run on devices with limited resources and be served at lower cost. The toolkit is aimed at machine learning engineers and researchers who deploy large language models in applications such as voice assistants, chatbots, and language translation, and it provides a streamlined workflow for compressing a model and deploying the result.

AngelSlim's core features

Quantization

A compression technique that lowers the numerical precision of model weights (for example, from 16-bit floating point to 8-bit or 4-bit integers), reducing memory usage and improving deployment efficiency.
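To make the idea concrete, here is a minimal sketch of symmetric per-tensor INT8 weight quantization in plain Python. It is a generic illustration, not AngelSlim's implementation or API: `quantize_int8` and `dequantize` are hypothetical helpers, and production toolkits usually quantize per channel or per group and operate on tensors rather than Python lists.

```python
from array import array

def quantize_int8(weights):
    """Symmetric per-tensor INT8 quantization: w ≈ scale * q, with q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One scale for the whole tensor maps the largest magnitude to 127.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float weights from the INT8 codes."""
    return [scale * v for v in q]

weights = [0.5, -1.27, 0.0, 0.9]  # hypothetical toy weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Storage cost: 32-bit floats (typecode 'f') vs. 8-bit signed integers (typecode 'b').
fp32_bytes = array("f", weights).itemsize * len(weights)
int8_bytes = array("b", q).itemsize * len(q)
print(q, fp32_bytes, int8_bytes)
```

Each weight now takes one byte instead of four, at the cost of a rounding error of at most half the scale per weight.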

Speculative Decoding

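An inference acceleration technique in which a small, fast draft model proposes several upcoming tokens and the large target model verifies them in a single forward pass, keeping the tokens it agrees with. Because several tokens can be accepted per pass of the large model, generation is faster, while the output still matches what the target model would produce on its own.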
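The draft-then-verify loop can be sketched with two toy "models", each a hypothetical function that maps a token prefix to its greedy next token. This sketch uses the greedy acceptance rule (keep draft tokens while they match the target's greedy choice); the sampling variant uses a rejection-sampling acceptance rule instead. It is not AngelSlim's implementation, and `target_model`, `draft_model`, and `speculative_decode` are hypothetical names.

```python
from typing import Callable, List

Model = Callable[[List[int]], int]  # maps a token prefix to its greedy next token

def target_model(prefix: List[int]) -> int:
    # Hypothetical "large" model: a deterministic toy rule.
    return (sum(prefix) * 7 + len(prefix)) % 10

def draft_model(prefix: List[int]) -> int:
    # Hypothetical "small" model: agrees with the target except at every third position.
    guess = target_model(prefix)
    return (guess + 1) % 10 if len(prefix) % 3 == 0 else guess

def greedy_decode(model: Model, prompt: List[int], n_new: int) -> List[int]:
    seq = list(prompt)
    for _ in range(n_new):
        seq.append(model(seq))
    return seq

def speculative_decode(target: Model, draft: Model, prompt: List[int], n_new: int, k: int = 4):
    seq, rounds = list(prompt), 0
    while len(seq) - len(prompt) < n_new:
        rounds += 1
        # 1. The cheap draft model proposes k tokens autoregressively.
        proposal: List[int] = []
        for _ in range(k):
            proposal.append(draft(seq + proposal))
        # 2. The target checks each proposed position. A real system scores all
        #    positions in one batched forward pass; this loop is for clarity only.
        accepted: List[int] = []
        for tok in proposal:
            expected = target(seq + accepted)
            if tok != expected:
                accepted.append(expected)  # replace the first mismatch with the target's token
                break
            accepted.append(tok)
        else:
            accepted.append(target(seq + accepted))  # all k accepted: one bonus token
        seq.extend(accepted)
    return seq[: len(prompt) + n_new], rounds

prompt = [1, 2, 3]
fast, rounds = speculative_decode(target_model, draft_model, prompt, n_new=12, k=4)
slow = greedy_decode(target_model, prompt, n_new=12)
print(fast == slow, rounds)
```

Every appended token is the target model's own greedy choice for its prefix, so the result equals plain greedy decoding with the target model; the speedup comes from accepting several draft tokens per verification round when the draft model guesses well.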

Pruning

A technique that removes redundant or low-importance weights (or whole structures such as channels or attention heads), reducing memory usage and computation and improving deployment efficiency.
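A minimal sketch of unstructured magnitude pruning, one common pruning criterion, is shown below. It is an assumption for illustration, not necessarily the criterion AngelSlim uses, and `magnitude_prune` is a hypothetical helper.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest absolute value."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be in [0, 1]")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by magnitude, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned

w = [0.8, -0.05, 0.3, -0.9, 0.01, 0.2]  # hypothetical toy weights
p = magnitude_prune(w, sparsity=0.5)
print(p)
```

Zeroed weights only save memory when they are stored in a sparse format or when whole structures (rows, channels, heads) are removed, which is one reason structured pruning is used for deployment.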

Distillation

A technique that trains a smaller student model to reproduce the outputs of a larger teacher model, so the smaller model can be deployed in place of the larger one at lower cost.
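The core of logit-based knowledge distillation is a loss that pulls the student's temperature-softened output distribution toward the teacher's. The sketch below computes that loss (KL divergence scaled by T²) for a single position in plain Python. It is a generic formulation, not necessarily the objective AngelSlim uses, and the function names and example logits are hypothetical. In training, this term is usually combined with the ordinary cross-entropy loss on the true labels.

```python
import math

def softmax(logits, temperature=1.0):
    """Numerically stable softmax of logits divided by a temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """T^2 * KL(teacher || student) on temperature-softened distributions."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(p * (math.log(p) - math.log(q)) for p, q in zip(p_teacher, p_student) if p > 0)
    # Scaling by T^2 keeps gradient magnitudes comparable across temperatures.
    return temperature ** 2 * kl

teacher = [2.0, 1.0, 0.1]        # hypothetical teacher logits over a 3-token vocabulary
close_student = [1.9, 1.1, 0.0]  # student that roughly matches the teacher
far_student = [0.1, 1.0, 2.0]    # student that ranks tokens in reverse
print(distillation_loss(close_student, teacher), distillation_loss(far_student, teacher))
```

A student whose softened distribution is closer to the teacher's gets a smaller loss, and the loss is zero when the two distributions coincide.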

How to use AngelSlim

- Visit the AngelSlim documentation website at https://angelslim.readthedocs.io

- Browse the available compression algorithms and choose the one that suits your needs

- Follow the installation tutorial to set up AngelSlim on your system

- Use the toolkit to compress and deploy your large language models

Use cases of AngelSlim

Deploying large language models on resource-constrained devices

AngelSlim helps developers compress large language models so they can be deployed on devices with limited memory and computational resources, enabling applications such as voice assistants, chatbots, and language translation.
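As a rough worked example of why lower precision matters on memory-limited devices, the memory taken by a model's weights is approximately parameters × bits per weight ÷ 8 bytes. The sketch assumes a hypothetical 7-billion-parameter model and counts weights only (no KV cache, activations, or quantization scales), so real footprints are larger; `weight_memory_gib` is a hypothetical helper.

```python
def weight_memory_gib(num_params, bits_per_weight):
    """Approximate memory for model weights only, in GiB (2**30 bytes)."""
    return num_params * bits_per_weight / 8 / 2**30

params = 7e9  # hypothetical 7B-parameter model
for name, bits in [("FP16", 16), ("INT8", 8), ("INT4", 4)]:
    print(f"{name}: {weight_memory_gib(params, bits):.1f} GiB")
```

This prints about 13.0, 6.5, and 3.3 GiB: going from 16-bit to 4-bit weights cuts weight memory by a factor of four.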

Improving model deployment efficiency

AngelSlim's compression and acceleration techniques help developers reduce the memory and computational requirements of large language models, improving deployment efficiency and reducing costs.

Who benefits from AngelSlim

Machine learning engineers and researchers

AngelSlim is designed for developers who work with large language models and want to improve deployment efficiency and reduce costs.

Pricing of AngelSlim

Open-source, free to use and distribute