The GenAI evaluation and observability platform

Freemium

Supported platforms

web

What is The GenAI evaluation and observability platform

Maxim is a platform designed for evaluating and observing Generative AI applications. It provides tools for comprehensive testing, performance monitoring, and debugging of AI models. Unlike generic monitoring solutions, Maxim focuses specifically on the unique challenges of GenAI, offering features like prompt-based testing, output quality assessment, and model behavior analysis. The platform leverages advanced techniques for automated evaluation and provides detailed insights into model performance, including latency, accuracy, and cost. Maxim benefits AI engineers, ML researchers, and product managers by streamlining the development and deployment of reliable and high-performing GenAI applications. It helps users identify and resolve issues, optimize model performance, and ensure the quality of AI-driven products.

The GenAI evaluation and observability platform's core features

Automated Evaluation Pipelines

Maxim automates the process of evaluating GenAI models by allowing users to define and execute comprehensive test suites. This includes support for various evaluation metrics such as accuracy, relevance, and toxicity. Users can configure pipelines to run tests on a schedule or trigger them based on events, ensuring continuous monitoring and rapid identification of performance regressions. This feature reduces manual effort and improves the efficiency of model validation.
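As a rough illustration of what such a pipeline does, the sketch below runs a small test suite against a model and flags a regression when the score falls below a stored baseline. It is plain Python and does not use the Maxim SDK; `EvalCase`, `exact_match_accuracy`, `has_regressed`, and `toy_model` are hypothetical names chosen for this example, and exact-match accuracy stands in for whatever metric a real suite would use.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    """One test case: a prompt and the answer we expect (hypothetical structure)."""
    prompt: str
    expected: str


def exact_match_accuracy(model: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Fraction of cases whose output matches the expected answer, ignoring case and whitespace."""
    if not cases:
        return 0.0
    hits = sum(
        model(case.prompt).strip().lower() == case.expected.strip().lower()
        for case in cases
    )
    return hits / len(cases)


def has_regressed(current: float, baseline: float, tolerance: float = 0.05) -> bool:
    """True when the current score is more than `tolerance` below the baseline."""
    return current < baseline - tolerance


def toy_model(prompt: str) -> str:
    """Stand-in for a real model call; returns canned answers."""
    answers = {"Capital of France?": "Paris", "2 + 2?": "4"}
    return answers.get(prompt, "unknown")


SUITE = [
    EvalCase("Capital of France?", "paris"),
    EvalCase("2 + 2?", "4"),
    EvalCase("Largest planet?", "Jupiter"),
]

score = exact_match_accuracy(toy_model, SUITE)
if has_regressed(score, baseline=0.9):
    print(f"Regression: accuracy {score:.2f} is below the 0.90 baseline")
```

A scheduler or CI job could call the same functions on every run, which is the general idea behind scheduled or event-triggered evaluation.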

Prompt-Based Testing

Maxim provides advanced prompt-based testing capabilities, allowing users to evaluate GenAI models' responses to various prompts and inputs. Users can create and manage prompt libraries, test different prompt variations, and analyze the impact of prompt engineering on model outputs. This feature is crucial for understanding how models behave under different conditions and for optimizing prompts to achieve desired results. It supports A/B testing of prompts.
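To make the A/B idea concrete, here is a minimal sketch that scores two prompt variants on the same inputs and picks the one with the higher mean score. It is not Maxim's API: `relevance_score` is a hypothetical keyword-coverage metric, and `toy_model` is a stand-in for a real model call.

```python
from statistics import mean
from typing import Callable


def relevance_score(output: str, keywords: list[str]) -> float:
    """Hypothetical metric: fraction of expected keywords that appear in the output."""
    if not keywords:
        return 0.0
    text = output.lower()
    return sum(keyword.lower() in text for keyword in keywords) / len(keywords)


def ab_test(
    model: Callable[[str], str],
    variants: dict[str, str],
    inputs: list[dict],
) -> dict[str, float]:
    """Return the mean relevance score for each prompt variant over all inputs."""
    results = {}
    for name, template in variants.items():
        scores = [
            relevance_score(
                model(template.format(question=item["question"])),
                item["keywords"],
            )
            for item in inputs
        ]
        results[name] = mean(scores)
    return results


def toy_model(prompt: str) -> str:
    """Stand-in model: gives a detailed answer only when asked to explain."""
    if "explain" in prompt.lower():
        return "Latency is the delay before a response; cost depends on tokens."
    return "It depends."


VARIANTS = {
    "A": "Answer briefly: {question}",
    "B": "Explain step by step: {question}",
}
INPUTS = [
    {"question": "What drives latency and cost?", "keywords": ["latency", "cost"]},
]

scores = ab_test(toy_model, VARIANTS, INPUTS)
winner = max(scores, key=scores.get)
print(scores, "winner:", winner)
```

With a real model, a larger input set and a significance check would be needed before declaring a winner; this sketch only shows the comparison loop.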

Output Quality Assessment

The platform offers tools for assessing the quality of GenAI model outputs, including metrics for fluency, coherence, and factual accuracy. Maxim supports both automated and human-in-the-loop evaluation methods, enabling users to combine the speed of automated testing with the nuanced judgment of human reviewers. This ensures that the outputs meet the required quality standards and are aligned with the intended use case.

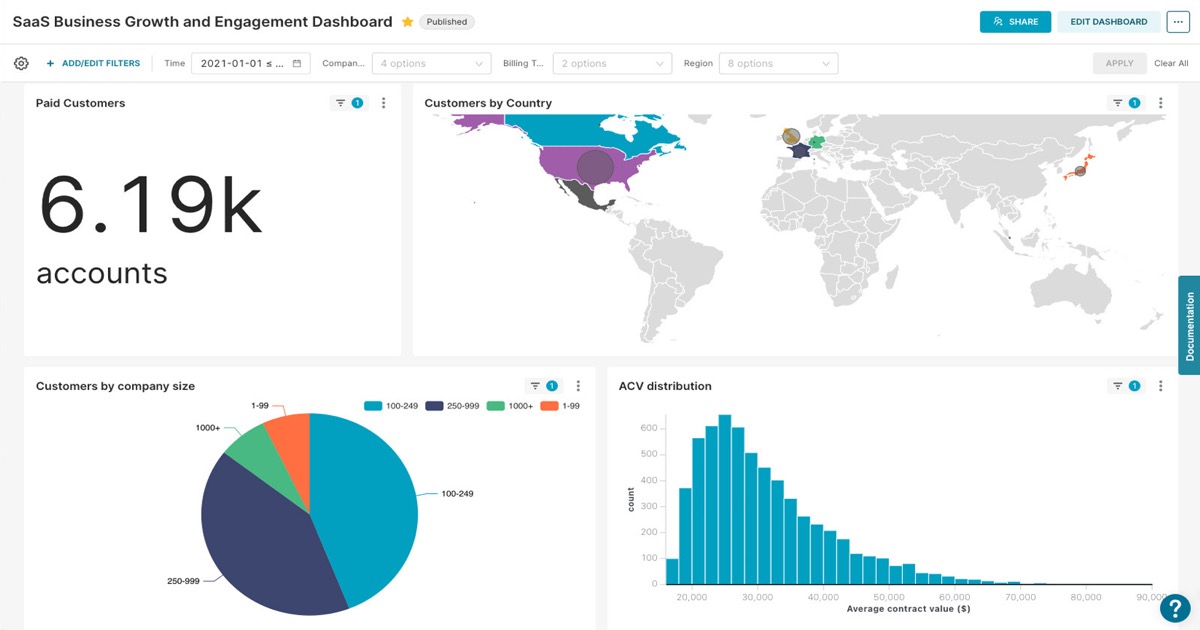

Real-time Observability Dashboard

Maxim's observability dashboard provides real-time monitoring of GenAI applications, displaying key performance indicators (KPIs) such as latency, error rates, and cost. The dashboard allows users to track model performance over time, identify anomalies, and troubleshoot issues quickly. It integrates with various logging and monitoring tools, providing a unified view of the application's health and performance.
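The KPIs named above can be derived from per-request logs. The sketch below shows one way to compute a 95th-percentile latency, an error rate, and a total cost. The `RequestLog` fields and the nearest-rank percentile method are assumptions made for this example, not a description of how Maxim computes its dashboard values.

```python
import math
from dataclasses import dataclass


@dataclass
class RequestLog:
    """One logged model request (hypothetical fields)."""
    latency_ms: float
    ok: bool
    cost_usd: float


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(logs: list[RequestLog]) -> dict[str, float]:
    """Aggregate latency, error rate, and cost over a batch of request logs."""
    if not logs:
        raise ValueError("no logs to summarize")
    return {
        "p95_latency_ms": percentile([log.latency_ms for log in logs], 95),
        "error_rate": sum(not log.ok for log in logs) / len(logs),
        "total_cost_usd": round(sum(log.cost_usd for log in logs), 6),
    }


LOGS = [
    RequestLog(latency_ms=120, ok=True, cost_usd=0.002),
    RequestLog(latency_ms=340, ok=True, cost_usd=0.004),
    RequestLog(latency_ms=95, ok=False, cost_usd=0.0),
    RequestLog(latency_ms=210, ok=True, cost_usd=0.003),
]

kpis = summarize(LOGS)
print(kpis)
```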

Model Behavior Analysis

Maxim offers tools for analyzing the behavior of GenAI models, including identifying biases, understanding model decision-making processes, and detecting potential vulnerabilities. Users can use these tools to gain insights into how models generate outputs and to ensure that they are aligned with ethical guidelines and regulatory requirements. This feature is important for building trustworthy and responsible AI applications.

Collaboration and Reporting

Maxim facilitates collaboration among team members by allowing users to share evaluation results, dashboards, and reports. The platform supports role-based access control, ensuring that sensitive data is protected. Users can generate custom reports to communicate findings to stakeholders, track progress over time, and demonstrate the value of their GenAI applications. This feature improves team communication and decision-making.

How to use The GenAI evaluation and observability platform

1. Sign up for a free account on the Maxim website.
2. Integrate the Maxim SDK into your GenAI application (supports Python, JavaScript, and more).
3. Define evaluation metrics and test cases relevant to your application's goals (e.g., accuracy, fluency, coherence).
4. Run evaluations to assess model performance against your defined metrics, generating reports and insights.
5. Monitor your GenAI application's performance in real-time using Maxim's observability dashboard.
6. Analyze the results, identify areas for improvement, and iterate on your model or prompts.

Use cases of The GenAI evaluation and observability platform

Evaluating LLM Performance

AI engineers use Maxim to evaluate the performance of different LLMs (e.g., GPT-3, Llama) for specific tasks, such as text generation, summarization, or question answering. They define test cases, measure accuracy, and compare the results to choose the best model for their application, optimizing for both performance and cost.

Monitoring Chatbot Quality

Product managers use Maxim to monitor the quality of a customer service chatbot. They set up automated tests to assess the chatbot's ability to answer customer questions accurately and efficiently. The platform provides real-time insights into the chatbot's performance, allowing them to quickly identify and fix issues.

Detecting Bias in AI Models

Researchers use Maxim to analyze GenAI models for bias. They create test cases that expose potential biases in the model's outputs. Maxim helps them identify and quantify these biases, enabling them to take corrective action and improve fairness.
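One common way to quantify a bias of this kind is to compare how often the model produces a favorable output for each group. The sketch below computes per-group positive rates and the gap between the highest and lowest rate. The group labels and records are made-up illustration data, and this is a generic fairness measure rather than a metric the source attributes to Maxim.

```python
from collections import defaultdict
from typing import Iterable


def positive_rate_by_group(records: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Share of favorable outputs per group, from (group, is_positive) pairs."""
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for group, is_positive in records:
        totals[group] += 1
        positives[group] += int(is_positive)
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(rates: dict[str, float]) -> float:
    """Difference between the highest and lowest group rate; 0 means equal rates."""
    return max(rates.values()) - min(rates.values())


# Illustration data only: each record is (group label, whether the output was favorable).
RECORDS = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", True),
]

rates = positive_rate_by_group(RECORDS)
gap = parity_gap(rates)
print(rates, "gap:", gap)
```

A gap near zero suggests similar treatment across groups on this test set; what counts as an acceptable gap depends on the application.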

Optimizing Prompt Engineering

Prompt engineers use Maxim to A/B test different prompts for a text generation model. They measure the impact of each prompt on the model's output quality, such as relevance and coherence. This helps them identify the most effective prompts for their specific use case, improving the overall performance of the model.

Who benefits from The GenAI evaluation and observability platform

AI Engineers

AI engineers use Maxim to evaluate, monitor, and debug GenAI models, ensuring they meet performance and quality standards. The platform streamlines the development process, enabling engineers to iterate faster and deploy reliable AI applications.

ML Researchers

ML researchers use Maxim to analyze model behavior, identify biases, and conduct experiments. The platform provides tools for in-depth evaluation and reporting, helping researchers gain insights into model performance and improve their research outcomes.

Product Managers

Product managers leverage Maxim to monitor the performance of GenAI-powered features and products. They use the platform to track key metrics, identify issues, and ensure that the AI components meet user expectations and business goals.

Prompt Engineers

Prompt engineers utilize Maxim to test and optimize prompts for various GenAI models. The platform allows them to A/B test different prompts, measure their impact on model outputs, and refine prompts to achieve desired results, improving the overall effectiveness of the AI applications.

Pricing of The GenAI evaluation and observability platform

Free plan available. Contact sales for custom pricing and enterprise plans.