One API

Unified API for LLM access.

Freemium

Supported platforms

web

What is One API

One API provides a unified interface for accessing various Large Language Models (LLMs). It simplifies the process of integrating different LLMs into applications by abstracting away the complexities of individual API endpoints and authentication methods. This allows developers to easily switch between models, manage costs, and implement features like load balancing and rate limiting. Unlike direct API integrations, One API offers centralized management, monitoring, and version control. It uses a proxy architecture to route requests to the appropriate LLM provider, supporting models from OpenAI, Cohere, and others. This is particularly beneficial for developers, researchers, and businesses seeking to build AI-powered applications without being locked into a single LLM provider.

One API's core features

Unified API Interface

Offers a single, consistent API endpoint for accessing multiple LLMs. This simplifies integration and reduces the code needed to switch between models. For example, a developer can swap from GPT-3.5 to GPT-4 with a simple configuration change, without modifying the core application logic. This abstraction layer also handles differences in request formats and response structures.
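To illustrate the idea of swapping models through configuration alone, here is a minimal Python sketch of an abstraction layer. It is not One API's actual implementation: the `ChatRequest` type, the adapter functions, the `ADAPTERS` table, and the `example-plain-model` name are all hypothetical, chosen only to show how differing request formats can be hidden behind one call.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class ChatRequest:
    """Provider-neutral request used by the application (hypothetical type)."""
    model: str
    prompt: str


def to_chat_style(req: ChatRequest) -> dict:
    # Chat-style payload: a list of role-tagged messages.
    return {"model": req.model,
            "messages": [{"role": "user", "content": req.prompt}]}


def to_plain_prompt_style(req: ChatRequest) -> dict:
    # Hypothetical provider that expects a single prompt string instead.
    return {"model": req.model, "prompt": req.prompt}


# Assumed mapping from model name to the adapter that builds its payload.
ADAPTERS: Dict[str, Callable[[ChatRequest], dict]] = {
    "gpt-3.5-turbo": to_chat_style,
    "gpt-4": to_chat_style,
    "example-plain-model": to_plain_prompt_style,
}


def build_payload(config: dict, prompt: str) -> dict:
    """Build a provider-specific payload from app config and a prompt."""
    model = config["model"]
    return ADAPTERS[model](ChatRequest(model=model, prompt=prompt))


# Switching models is a configuration change; the calling code is unchanged.
payload_a = build_payload({"model": "gpt-3.5-turbo"}, "Hello")
payload_b = build_payload({"model": "gpt-4"}, "Hello")
```

The application only ever calls `build_payload`, so differences in request structure stay inside the adapters.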

Model Switching & Routing

Enables easy switching between different LLMs based on performance, cost, or availability. It intelligently routes requests to the most suitable model, optimizing for factors like latency and token pricing. This allows for A/B testing of different models and dynamic allocation of resources. The routing logic can be customized based on various criteria, such as request size or user location.
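One simple way to express routing of this kind is a rule that filters by availability and latency, then picks the cheapest remaining model. The sketch below assumes that rule; the `ModelOption` fields, the model names, and all prices and latencies are placeholders, not values or logic taken from One API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_1k_tokens: float  # placeholder price, not real pricing
    avg_latency_ms: float      # placeholder latency measurement
    available: bool = True


def choose_model(options: List[ModelOption],
                 max_latency_ms: float) -> Optional[ModelOption]:
    """Return the cheapest available model within the latency cap, or None."""
    eligible = [o for o in options
                if o.available and o.avg_latency_ms <= max_latency_ms]
    if not eligible:
        return None
    # Cheapest first; ties are broken by lower latency.
    return min(eligible, key=lambda o: (o.cost_per_1k_tokens, o.avg_latency_ms))


options = [
    ModelOption("model-a", cost_per_1k_tokens=0.5, avg_latency_ms=400),
    ModelOption("model-b", cost_per_1k_tokens=3.0, avg_latency_ms=250),
    ModelOption("model-c", cost_per_1k_tokens=0.2, avg_latency_ms=900),
    ModelOption("model-d", cost_per_1k_tokens=0.1, avg_latency_ms=100,
                available=False),
]

relaxed = choose_model(options, max_latency_ms=1000)  # cost dominates
strict = choose_model(options, max_latency_ms=300)    # latency cap dominates
```

Other criteria mentioned above, such as request size or user location, could be added as further filters before the `min` call.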

Cost Management & Optimization

Provides tools for monitoring and controlling LLM usage costs. Features include cost tracking, budgeting, and rate limiting. Users can set spending limits and receive alerts when they approach their budget. The platform also offers features like intelligent caching and request batching to reduce costs. Detailed analytics provide insights into model usage patterns.
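The spending-limit and alert behaviour described above can be sketched as a small tracker. This is an illustration under assumed semantics (alert at 80% of the limit, reject requests that would exceed it); the `BudgetTracker` class and its return values are hypothetical and do not describe One API's real interface.

```python
class BudgetTracker:
    """Tracks spend against a limit (hypothetical helper for illustration)."""

    def __init__(self, limit: float, alert_ratio: float = 0.8) -> None:
        self.limit = limit
        self.alert_ratio = alert_ratio  # assumed alert threshold
        self.spent = 0.0

    def record(self, cost: float) -> str:
        """Record a request cost and return 'ok', 'alert', or 'blocked'."""
        if self.spent + cost > self.limit:
            # The request would exceed the budget, so it is not recorded.
            return "blocked"
        self.spent += cost
        if self.spent >= self.alert_ratio * self.limit:
            return "alert"
        return "ok"


tracker = BudgetTracker(limit=10.0)
first = tracker.record(5.0)   # 5.0 spent, below the alert threshold
second = tracker.record(3.0)  # 8.0 spent, reaches 80% of the limit
third = tracker.record(3.0)   # would reach 11.0, so it is rejected
```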

Centralized Authentication

Simplifies authentication by securely storing and managing API keys and access credentials for all supported LLMs in a single place. This reduces the risk of exposing sensitive information and streamlines the authentication process. It supports various authentication methods, including API keys, OAuth, and custom authentication schemes.
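As a rough picture of keeping provider credentials in one place, the sketch below resolves keys from a single mapping (the process environment by default) and masks them for logging. The `<PROVIDER>_API_KEY` naming convention and both helper functions are assumptions made for this example, not part of One API.

```python
import os
from typing import Mapping


def resolve_provider_key(provider: str,
                         env: Mapping[str, str] = os.environ) -> str:
    """Look up a provider's key from one central mapping (assumed naming)."""
    var = f"{provider.upper()}_API_KEY"
    try:
        return env[var]
    except KeyError:
        raise KeyError(
            f"No credential configured for {provider!r} (expected {var})"
        ) from None


def mask(key: str) -> str:
    """Return a log-safe form of a key, never the full secret."""
    return key[:3] + "..." + key[-2:] if len(key) > 8 else "***"


# Example with an explicit mapping and a dummy key instead of real secrets.
demo_env = {"OPENAI_API_KEY": "sk-test-123456"}
key = resolve_provider_key("openai", demo_env)
safe = mask(key)
```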

Monitoring and Analytics

Offers real-time monitoring of API usage, performance metrics, and error rates. Provides detailed analytics dashboards to track request volume, latency, and cost per model. This allows users to identify bottlenecks, optimize performance, and troubleshoot issues quickly. Customizable dashboards and alerting features are available.
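The per-model figures mentioned above (request volume, latency, error rate) can be derived from a request log with a few lines of aggregation. The sketch below assumes a simple `RequestLog` record; the field names and sample values are hypothetical and only show the kind of calculation a dashboard might perform.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RequestLog:
    model: str
    latency_ms: float
    ok: bool  # False when the request ended in an error


def summarize(logs: List[RequestLog]) -> Dict[str, dict]:
    """Aggregate request count, average latency, and error rate per model."""
    grouped = defaultdict(list)
    for log in logs:
        grouped[log.model].append(log)

    summary = {}
    for model, entries in grouped.items():
        n = len(entries)
        summary[model] = {
            "requests": n,
            "avg_latency_ms": sum(e.latency_ms for e in entries) / n,
            "error_rate": sum(1 for e in entries if not e.ok) / n,
        }
    return summary


logs = [
    RequestLog("model-a", 100.0, True),
    RequestLog("model-a", 300.0, False),
    RequestLog("model-b", 250.0, True),
]
report = summarize(logs)
```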

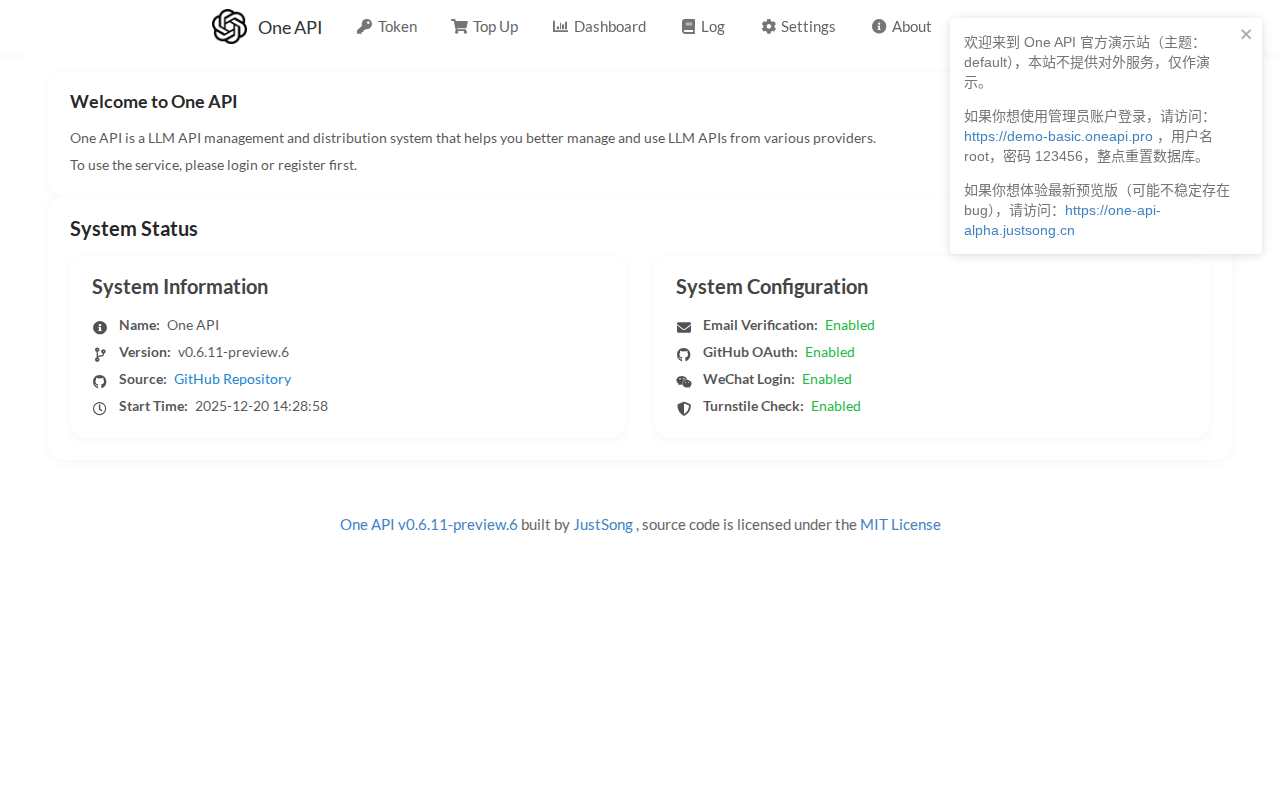

How to use One API

The product page currently shows only a placeholder, so no detailed usage guide is available. Based on the product description, the general steps would likely be:

1. Sign up for an account.
2. Obtain API keys for the desired LLM providers (e.g., OpenAI).
3. Configure the One API platform with your API keys.
4. Use the One API endpoints in your application to make requests to the LLMs.
5. Monitor usage and manage costs through the One API dashboard.
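Step 4 above might look roughly like the following Python sketch, which builds (but does not send) an HTTP request using only the standard library. Everything specific here is an assumption: the base URL `https://one-api.example.com`, the `/v1/chat/completions` path, the bearer-token header, and the chat-style payload are placeholders, since the real endpoint details are not documented in the source.

```python
import json
import urllib.request

# Placeholder values; the real base URL, path, and token are not documented.
BASE_URL = "https://one-api.example.com"
API_TOKEN = "your-one-api-token"


def build_chat_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a POST request for an assumed chat-style endpoint."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        url=f"{BASE_URL}/v1/chat/completions",  # assumed path
        data=body,
        headers={
            "Authorization": f"Bearer {API_TOKEN}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request("gpt-4", "Summarize this paragraph.")
# To actually send it, pass `req` to urllib.request.urlopen once the
# placeholder URL and token have been replaced with real values.
```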

Use cases of One API

AI Chatbot Development

Developers building chatbots can use One API to access various LLMs like GPT-3.5 or GPT-4. They can easily switch between models to optimize for response quality, cost, or latency. This allows for rapid prototyping and A/B testing of different LLM configurations, leading to a better user experience.

Content Generation

Content creators can leverage One API to generate articles, summaries, and social media posts using different LLMs. They can compare the outputs of various models and choose the best fit for their needs. This streamlines the content creation process and allows for experimentation with different writing styles.

Application Integration

Software developers can integrate LLMs into their applications to provide features like natural language processing, text summarization, and sentiment analysis. One API simplifies the integration process by providing a unified interface, reducing development time and complexity. This allows developers to focus on building features rather than managing API integrations.

Research and Experimentation

Researchers can use One API to experiment with different LLMs and compare their performance on various tasks. They can easily switch between models, track usage metrics, and analyze the results. This facilitates research on LLM capabilities and helps identify the best models for specific applications.

Who benefits from One API

AI Application Developers

Developers building applications that utilize LLMs. They need a simplified way to access and manage multiple LLMs without dealing with the complexities of individual API integrations, authentication, and cost management.

Researchers and Data Scientists

Researchers and data scientists who need to experiment with different LLMs for their projects. They benefit from the ability to easily switch between models, track performance metrics, and manage costs effectively.

Businesses and Startups

Businesses and startups looking to integrate AI into their products or services. They need a cost-effective and scalable solution for accessing and managing LLMs, allowing them to focus on their core business.

Enterprises

Large enterprises that need to manage multiple LLMs across different teams and projects. They require centralized control, cost optimization, and robust monitoring capabilities to ensure efficient and secure LLM usage.

Pricing of One API

Pricing details are not published on the product page. The listing is tagged Freemium, which suggests a free tier for basic usage and paid plans for higher request limits and advanced features. Contact the vendor for specific pricing.