LlamaIndex

AI Framework for Document LLMs

Freemium

Support platforms

web

What is LlamaIndex

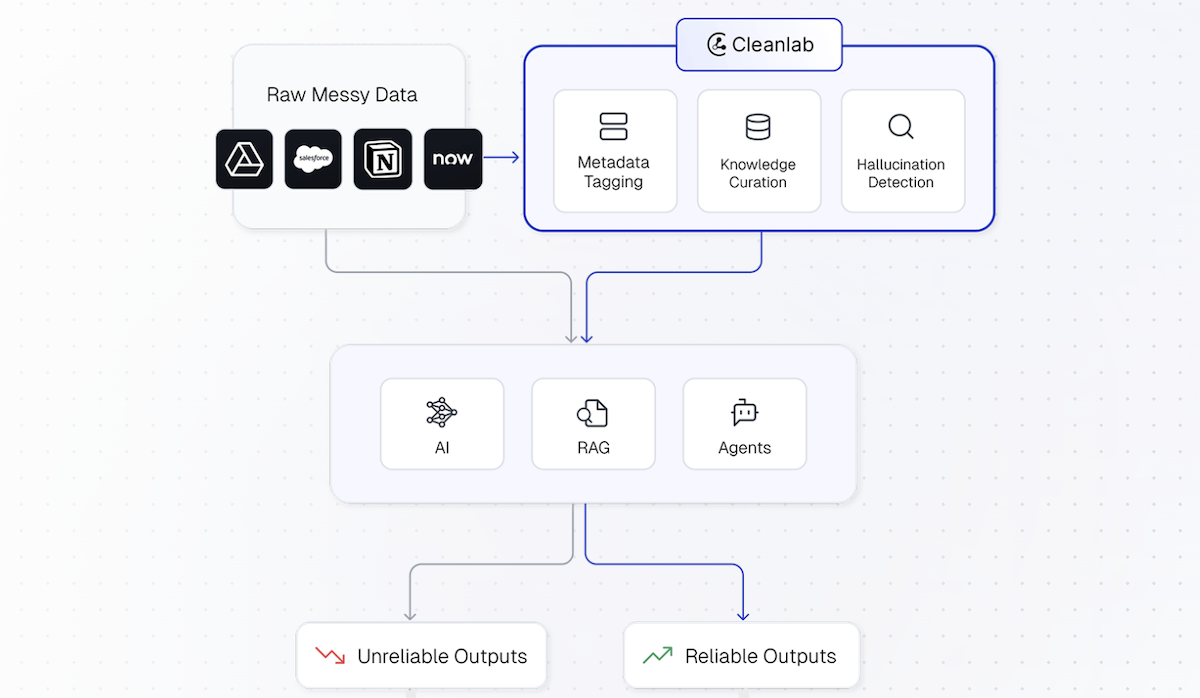

LlamaIndex is a data framework for LLM applications, designed to simplify the process of connecting custom data sources to large language models. It enables developers to build powerful applications like chatbots, question-answering systems, and data analysis tools by providing tools for data ingestion, structuring, and access. Unlike generic LLM wrappers, LlamaIndex focuses on data-centric workflows, offering features such as data connectors for various formats (PDFs, APIs, databases), indexing strategies (e.g., vector stores), and query interfaces. This allows for efficient retrieval and reasoning over complex data, making it ideal for developers seeking to leverage LLMs with their own datasets. It is particularly useful for building Retrieval-Augmented Generation (RAG) applications.

LlamaIndex's Core features

Data Connectors

LlamaIndex provides a wide array of data connectors to ingest data from various sources, including PDFs, websites, APIs, databases (e.g., SQL, NoSQL), and cloud storage services (e.g., AWS S3, Google Cloud Storage). This lets users bring existing data into LLM applications with little manual data preparation. It supports over 100 different data sources, with new connectors added frequently.
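
To make the connector idea concrete, here is a minimal standard-library sketch of what a directory-style connector does: walk a folder, read each text file, and attach metadata about where it came from. The names `Document` and `load_directory` are hypothetical and are not LlamaIndex's API; real connectors also parse PDFs and other non-text formats, which this sketch does not attempt.

```python
from dataclasses import dataclass, field
from pathlib import Path


@dataclass
class Document:
    """Hypothetical container: raw text plus metadata about its origin."""
    text: str
    metadata: dict = field(default_factory=dict)


def load_directory(input_dir: str, suffixes=(".txt", ".md")) -> list[Document]:
    """Read every file under input_dir whose suffix matches into a Document."""
    docs = []
    for path in sorted(Path(input_dir).rglob("*")):
        if path.is_file() and path.suffix.lower() in suffixes:
            docs.append(
                Document(
                    text=path.read_text(encoding="utf-8"),
                    metadata={"file_name": path.name, "file_path": str(path)},
                )
            )
    return docs
```

Keeping the source path in the metadata is what later allows an answer to be traced back to the file it came from.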

Indexing Strategies

LlamaIndex offers multiple indexing strategies to structure data for efficient retrieval. These include vector indexes backed by vector stores (e.g., ChromaDB, Pinecone, Weaviate), tree-based indexes, and keyword tables. Users can select the indexing method that best fits their data characteristics and query requirements. The choice of index significantly affects retrieval speed and relevance, with vector stores being particularly effective for semantic search.
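
The difference between a keyword table and a vector index can be sketched in a few lines of plain Python. This is a toy illustration, not LlamaIndex code: `build_keyword_table`, `embed`, and `vector_search` are hypothetical names, and the "embedding" here is only a bag-of-words count vector standing in for a real embedding model, so it matches shared words rather than meaning.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def build_keyword_table(docs: list[str]) -> dict[str, set[int]]:
    """Keyword table: map each token to the ids of documents containing it."""
    table = defaultdict(set)
    for doc_id, text in enumerate(docs):
        for token in tokenize(text):
            table[token].add(doc_id)
    return table


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts (a stand-in for a real embedding model)."""
    return Counter(tokenize(text))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def vector_search(docs: list[str], query: str, top_k: int = 2) -> list[int]:
    """Vector index lookup: rank documents by cosine similarity to the query."""
    q = embed(query)
    scored = [(cosine(embed(d), q), i) for i, d in enumerate(docs)]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [i for score, i in scored[:top_k] if score > 0]
```

A keyword table answers "which documents contain this exact term", while the vector lookup returns a ranked list by similarity, which is why vector indexes are the usual choice once a real embedding model is plugged in.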

Query Interfaces

LlamaIndex provides flexible query interfaces for interacting with the indexed data. Users can create query engines that support various query types, such as keyword search, semantic search, and hybrid search. Advanced features include multi-step reasoning, summarization, and integration with external tools and APIs. Queries can be executed both synchronously and asynchronously.
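
Hybrid search has to merge a keyword ranking and a semantic ranking into one list. A minimal sketch of one common way to do that, reciprocal rank fusion, is shown below. It is an illustration of the general technique, not a description of how any particular LlamaIndex retriever is implemented; the function name and the default `k=60` are assumptions chosen for the example.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document ids into one ranking.

    Each document earns 1 / (k + rank) from every list it appears in,
    so documents ranked highly by several retrievers rise to the top.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by id for a stable order.
    return sorted(scores, key=lambda d: (-scores[d], str(d)))


keyword_hits = ["a", "b", "c"]    # e.g. from a keyword index
semantic_hits = ["b", "d", "a"]   # e.g. from a vector index
fused = reciprocal_rank_fusion([keyword_hits, semantic_hits])
```

Because only ranks are used, the two retrievers do not need comparable score scales, which is the main reason this fusion method is popular for hybrid search.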

RAG Pipelines

LlamaIndex simplifies the construction of Retrieval-Augmented Generation (RAG) pipelines. It offers pre-built components and utilities for data retrieval, context augmentation, and response generation. This streamlines the development of applications that use LLMs to answer questions based on specific documents or datasets, which can improve accuracy and reduce hallucinations. It also provides built-in support for integrating with LLMs such as OpenAI's GPT models.
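
The three stages named above (retrieval, context augmentation, response generation) can be sketched without any framework. The code below is a toy under stated assumptions: `retrieve` uses simple word overlap instead of vector search, the prompt wording is invented for the example, and `llm` is any callable taking and returning a string rather than a real model client.

```python
import re


def retrieve(chunks, question, top_k=2):
    """Rank chunks by word overlap with the question (stand-in for vector search)."""
    q_words = set(re.findall(r"\w+", question.lower()))
    scored = []
    for position, chunk in enumerate(chunks):
        overlap = len(q_words & set(re.findall(r"\w+", chunk.lower())))
        if overlap:
            scored.append((-overlap, position, chunk))
    return [chunk for _, _, chunk in sorted(scored)[:top_k]]


def build_prompt(context_chunks, question):
    """Augment the question with the retrieved context."""
    context = "\n".join(f"- {c}" for c in context_chunks)
    return (
        "Answer using only the context below. "
        "If the answer is not there, say you do not know.\n"
        f"Context:\n{context}\n"
        f"Question: {question}\nAnswer:"
    )


def answer(chunks, question, llm):
    """Retrieve, augment, generate. `llm` is any callable str -> str."""
    return llm(build_prompt(retrieve(chunks, question), question))
```

Restricting the prompt to retrieved context is the step that ties the model's answer to the source documents instead of its general training data.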

Customization & Extensibility

The framework is designed to be highly customizable and extensible. Developers can modify existing components or create their own modules to tailor the system to their needs, including custom data connectors, indexing strategies, query engines, and response generation modules. It also supports integration with LangChain and other popular LLM frameworks.

Evaluation Framework

LlamaIndex includes a built-in evaluation framework for assessing the performance of RAG pipelines and other LLM applications. This allows users to measure the accuracy, relevance, and efficiency of their systems. Metrics include faithfulness, context relevance, and answer similarity. Evaluation can be automated across multiple datasets and metrics.
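
To give a feel for what such metrics measure, here is a rough sketch of two of them using only lexical overlap. These are crude proxies written for illustration, with hypothetical function names. They are not LlamaIndex's evaluators, which generally rely on an LLM judge or embeddings rather than token matching.

```python
import re
from collections import Counter


def _tokens(text):
    return re.findall(r"\w+", text.lower())


def answer_similarity(predicted, reference):
    """Token-level F1 between a predicted answer and a reference answer."""
    pred, ref = Counter(_tokens(predicted)), Counter(_tokens(reference))
    overlap = sum((pred & ref).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(pred.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


def faithfulness(answer, context):
    """Share of answer tokens that also appear in the retrieved context."""
    answer_tokens = _tokens(answer)
    if not answer_tokens:
        return 0.0
    context_tokens = set(_tokens(context))
    return sum(t in context_tokens for t in answer_tokens) / len(answer_tokens)
```

A low faithfulness score flags answers that introduce words absent from the retrieved context, which is a cheap first signal of possible hallucination before running a more expensive judged evaluation.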

How to use LlamaIndex

1. Install the LlamaIndex Python package using pip: `pip install llama-index`.
2. Choose a data connector to load your data. For example, use `SimpleDirectoryReader` to load documents from a directory: `from llama_index.core import SimpleDirectoryReader; documents = SimpleDirectoryReader(input_dir="./data").load_data()`. (In releases before 0.10, the import is `from llama_index import ...` instead.)
3. Build an index over your documents. Use `VectorStoreIndex` for semantic search: `from llama_index.core import VectorStoreIndex; index = VectorStoreIndex.from_documents(documents)`.
4. Create a query engine to interact with the index: `query_engine = index.as_query_engine()`.
5. Query the index using the query engine: `response = query_engine.query("What is the document about?")`.
6. Customize the index and query engine with different parameters and settings to optimize performance and accuracy.
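
Put together, the steps above might look like the following sketch. It assumes llama-index 0.10 or later (where the core classes live under `llama_index.core`), a `./data` directory containing readable files, and the default models, which call OpenAI and therefore need an `OPENAI_API_KEY` environment variable. The wrapper function `build_query_engine` and its `top_k` default are illustrative choices, not part of the library.

```python
def build_query_engine(data_dir: str = "./data", top_k: int = 3):
    """Load files from data_dir, index them, and return a query engine."""
    # Imported lazily so this module can be imported without llama-index installed.
    from llama_index.core import SimpleDirectoryReader, VectorStoreIndex

    # Step 2: load documents from a directory.
    documents = SimpleDirectoryReader(input_dir=data_dir).load_data()
    # Step 3: embed the documents and build a vector index over them.
    index = VectorStoreIndex.from_documents(documents)
    # Steps 4 and 6: similarity_top_k sets how many chunks are retrieved per query.
    return index.as_query_engine(similarity_top_k=top_k)
```

Calling `build_query_engine("./data").query("What is the document about?")` then corresponds to step 5; printing the returned response shows the generated answer. Raising `top_k` gives the model more context at the cost of a longer prompt.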

Use cases of LlamaIndex

Document Question Answering

A legal firm uses LlamaIndex to build a system that answers questions about legal documents. Lawyers can upload contracts and case files, and the system quickly retrieves relevant information to answer complex legal queries, saving time and improving accuracy. The system leverages semantic search to understand the context of the questions.

Enterprise Knowledge Management

A company uses LlamaIndex to create a searchable knowledge base from internal documents, wikis, and manuals. Employees can easily find answers to their questions and access relevant information, improving productivity and reducing reliance on manual searches. The system supports multiple data formats and integrates with existing enterprise systems.

Chatbot for Customer Support

A software company integrates LlamaIndex into its customer support chatbot. The chatbot can access and retrieve information from product documentation, FAQs, and support tickets to provide accurate and helpful responses to customer inquiries, improving customer satisfaction and reducing support costs. The chatbot uses RAG to provide up-to-date information.

Research and Analysis

Researchers use LlamaIndex to analyze large datasets of scientific papers and reports. They can quickly extract key insights, identify relevant information, and generate summaries, accelerating the research process and enabling more efficient literature reviews. The system supports advanced search and filtering capabilities.

Who benefits from LlamaIndex

AI Developers

AI developers benefit from LlamaIndex by accelerating the development of LLM-powered applications. It provides pre-built components and tools to streamline data ingestion, indexing, and querying, reducing development time and complexity. It allows developers to focus on building innovative solutions rather than low-level infrastructure.

Data Scientists

Data scientists can leverage LlamaIndex to build and deploy LLM-based solutions for data analysis and knowledge discovery. The framework simplifies the process of integrating data from various sources, building indexes, and querying data, enabling data scientists to extract valuable insights from their datasets more efficiently.

Software Engineers

Software engineers can use LlamaIndex to integrate LLM capabilities into their applications. The framework provides a flexible and extensible platform for building intelligent features such as chatbots, question-answering systems, and data analysis tools, enhancing the functionality and user experience of their software products.

Researchers

Researchers can utilize LlamaIndex to build and test LLM-based applications for their research. The framework offers tools for data ingestion, indexing, and querying, enabling researchers to quickly prototype and evaluate different approaches to their research problems. It also supports integration with various LLMs and evaluation metrics.

Pricing of LlamaIndex

The core framework is open source (MIT license). LlamaIndex Cloud offers a free tier with limited usage, paid plans with higher limits and more features, and enterprise options with custom pricing.