Cleanlab

Real-time LLM response trust scores

Paid

Support platforms

web

What is Cleanlab

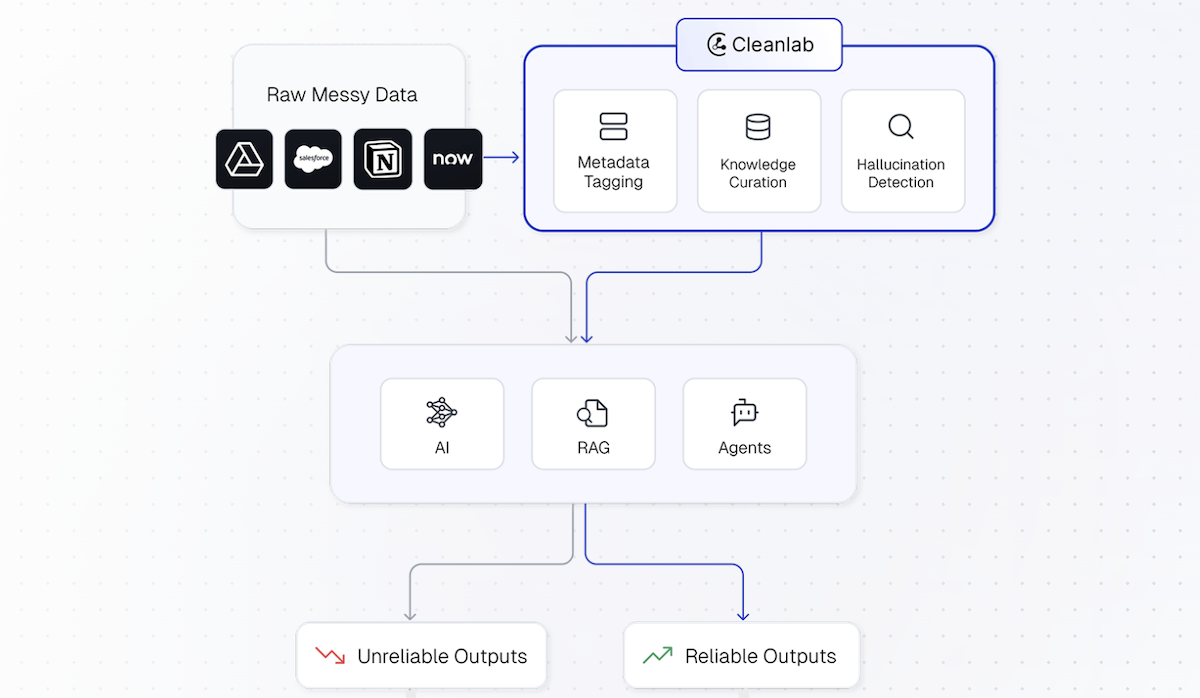

Cleanlab's Trustworthy Language Model (TLM) provides real-time trustworthiness scores for Large Language Model (LLM) outputs, reducing the risk that hallucinations and incorrect answers reach users. Unlike generic LLM evaluation tools, TLM scores each response at runtime and is designed to integrate into existing workflows. The scores help users tell reliable responses from unreliable ones and point to where an application needs improvement. TLM is aimed at businesses and developers building AI solutions that must be trustworthy, such as chatbots, data extraction tools, and agent-based systems.

Cleanlab's Core features

Real-time Trust Scoring

TLM returns a trustworthiness score for each LLM output at runtime, so an application can act on the score immediately. Unlike batch evaluation, this allows unreliable responses to be identified as they are generated. The scoring is based on a proprietary algorithm that analyzes various factors, including the LLM's confidence, the consistency of the response, and the presence of factual errors. This real-time capability matters most in applications that must decide on the spot whether to show or act on a response.

Hallucination Detection

TLM is designed to detect and flag LLM-generated hallucinations, which are incorrect or fabricated responses. It utilizes advanced techniques to identify inconsistencies and factual inaccuracies within the LLM's output. This feature is critical for applications where accuracy is paramount, such as medical diagnosis or financial analysis, where incorrect information can have serious consequences. The system provides a confidence score indicating the likelihood of a hallucination.

Customizable Evaluation Criteria

TLM allows users to define custom evaluation criteria tailored to their specific use cases and data. This flexibility enables users to adapt the trustworthiness scoring to their specific needs, ensuring that the system aligns with their unique requirements. Users can specify the types of errors to prioritize and the acceptable levels of risk. This customization is essential for optimizing the performance of LLM applications in diverse domains.
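As a rough illustration of the "acceptable levels of risk" idea, the sketch below keeps a separate minimum trust score per use case on the application side. It does not show TLM's own configuration options; `RiskProfile`, `PROFILES`, `is_acceptable`, and all threshold values are hypothetical names and numbers chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskProfile:
    """Application-side risk setting (hypothetical, not a TLM type)."""
    name: str
    min_trust: float  # lowest acceptable trust score, assumed to be in [0, 1]


# Illustrative values only; real thresholds should be tuned on your own data.
PROFILES = {
    "marketing_copy": RiskProfile("marketing_copy", 0.5),
    "customer_support": RiskProfile("customer_support", 0.75),
    "financial_analysis": RiskProfile("financial_analysis", 0.9),
}


def is_acceptable(trust_score: float, use_case: str) -> bool:
    """Return True if the score meets the minimum for the given use case."""
    return trust_score >= PROFILES[use_case].min_trust
```

With these made-up profiles, the same score can pass for a low-risk use case and fail for a high-risk one, which is the point of making the threshold configurable.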

Integration with Various LLMs

TLM supports integration with a wide range of LLMs, including OpenAI models and others, providing broad compatibility. This allows users to apply TLM's trustworthiness scoring capabilities regardless of their chosen LLM provider. The system is designed to be adaptable to different LLM architectures and output formats, ensuring seamless integration. This flexibility simplifies the process of incorporating TLM into existing AI workflows.

Use Case Specific Solutions

Cleanlab offers pre-built solutions and guides for various use cases, such as trustworthy RAG chatbots, data extraction, and agent-based systems. These solutions provide a starting point for implementing TLM in specific applications, streamlining the development process. The guides offer best practices and examples for integrating TLM into different workflows. This targeted approach helps users quickly deploy and benefit from TLM's capabilities.

How to use Cleanlab

1. Access the Cleanlab TLM documentation and familiarize yourself with the available APIs and SDKs.
2. Integrate the TLM API into your existing LLM-based application or workflow.
3. Send LLM responses to the TLM API for real-time trustworthiness scoring.
4. Analyze the returned trust scores to identify potentially unreliable LLM outputs.
5. Implement strategies to handle low-trust scores, such as rephrasing prompts, cross-validating responses, or providing alternative answers.
6. Continuously monitor and refine your LLM application based on TLM's insights to improve accuracy and reliability.
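The scoring and fallback steps above can be sketched as follows. This is a minimal sketch, not Cleanlab's API: the scorer is passed in as a plain callable so the example runs offline, and `ScoreFn`, `guard_response`, `fake_score`, the fallback text, and the 0.7 threshold are all assumptions made for illustration. In a real integration, the callable would wrap whatever client the TLM documentation describes.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical signature: takes (prompt, response), returns a score in [0, 1].
ScoreFn = Callable[[str, str], float]

FALLBACK_TEXT = "I'm not confident in this answer. Let me connect you with a human."


@dataclass
class GuardedAnswer:
    text: str           # what the application should show the user
    trust_score: float  # score returned by the scorer
    trusted: bool       # whether the original response passed the threshold


def guard_response(prompt: str, response: str, score_fn: ScoreFn,
                   threshold: float = 0.7) -> GuardedAnswer:
    """Score a response and swap in a fallback when the score is too low."""
    score = score_fn(prompt, response)
    if score >= threshold:
        return GuardedAnswer(response, score, True)
    return GuardedAnswer(FALLBACK_TEXT, score, False)


def fake_score(prompt: str, response: str) -> float:
    """Stand-in scorer for demonstration only; not how TLM computes scores."""
    return 0.2 if "maybe" in response.lower() else 0.9


if __name__ == "__main__":
    print(guard_response("What is the refund window?", "30 days.", fake_score))
    print(guard_response("What is the refund window?", "Maybe 90 days?", fake_score))
```

Passing the scorer as a parameter keeps the threshold logic testable without network access and makes it easy to swap the fallback for another strategy, such as rephrasing the prompt and retrying.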

Use cases of Cleanlab

Trustworthy Chatbots

Developers can use TLM to build chatbots that provide reliable and accurate information by scoring the trustworthiness of each response. This ensures that the chatbot avoids generating incorrect or misleading answers, improving user trust and satisfaction. For example, a customer service chatbot can use TLM to verify the accuracy of its responses before providing them to a user.

Data Extraction

TLM can be used to improve the accuracy of data extraction from unstructured text. By scoring the trustworthiness of extracted information, users can identify and correct errors, ensuring data quality. For example, a company can use TLM to extract key information from contracts, verifying the accuracy of the extracted data before using it.
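One way to act on per-field scores in an extraction pipeline is to accept high-scoring fields automatically and queue the rest for manual review. The sketch below assumes each extracted field already comes with a trust score in [0, 1]; the function name `split_by_trust`, the field names, the values, and the scores are invented for illustration.

```python
from typing import Dict, List, Tuple


def split_by_trust(
    fields: Dict[str, Tuple[str, float]], threshold: float = 0.8
) -> Tuple[Dict[str, str], List[str]]:
    """Separate extracted fields into accepted values and names needing review.

    `fields` maps a field name to (extracted value, trust score).
    """
    accepted: Dict[str, str] = {}
    needs_review: List[str] = []
    for name, (value, score) in fields.items():
        if score >= threshold:
            accepted[name] = value
        else:
            needs_review.append(name)
    return accepted, needs_review


# Made-up contract fields and scores, for demonstration only.
extracted = {
    "party_a": ("Acme Corp", 0.95),
    "effective_date": ("2024-01-15", 0.91),
    "termination_clause": ("90 days notice", 0.42),
}

accepted, needs_review = split_by_trust(extracted)
```

Here only `termination_clause` falls below the assumed threshold, so a reviewer would check that one field instead of the whole contract.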

Agent-Based Systems

TLM can be integrated into agent-based systems to ensure the reliability of the agents' actions and decisions. By scoring the trustworthiness of the agents' outputs, developers can prevent agents from taking actions based on incorrect information. For example, a financial trading agent can use TLM to score the trustworthiness of its LLM-generated analysis before making trades.

Yes/No Decision Making

TLM can be applied to improve the accuracy of yes/no decision-making processes. By scoring the trustworthiness of the LLM's responses, users can make more informed decisions based on reliable information. For example, a medical diagnostic tool can use TLM to assess the trustworthiness of the LLM's diagnosis before providing it to a doctor.
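A yes/no workflow can treat a low trust score as a third outcome and hand the case to a person. The sketch below assumes the LLM's answer and its trust score are already available; `Decision`, `decide`, and the 0.85 threshold are hypothetical choices for this example.

```python
from enum import Enum


class Decision(Enum):
    YES = "yes"
    NO = "no"
    ESCALATE = "escalate"  # hand off to a human reviewer


def decide(llm_answer: str, trust_score: float, threshold: float = 0.85) -> Decision:
    """Accept a yes/no answer only when it is well-formed and trusted."""
    normalized = llm_answer.strip().lower().rstrip(".")
    if trust_score < threshold or normalized not in ("yes", "no"):
        return Decision.ESCALATE
    return Decision(normalized)
```

Escalating on malformed answers as well as low scores means the automated path only ever emits a clean "yes" or "no".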

Who benefits from Cleanlab

AI Developers

AI developers benefit from TLM by gaining a tool to improve the reliability and accuracy of their LLM-based applications. They can use TLM to identify and mitigate the risks associated with LLM hallucinations, ensuring that their applications provide trustworthy information and deliver a better user experience.

Data Scientists

Data scientists can leverage TLM to enhance the quality of data extracted from LLMs. By scoring the trustworthiness of LLM outputs, data scientists can improve the accuracy of their datasets and models, leading to more reliable insights and better decision-making. This is especially useful for tasks like data annotation and information retrieval.

Business Leaders

Business leaders can use TLM to build trust in their AI-powered products and services. By ensuring the reliability of LLM responses, they can improve customer satisfaction, reduce the risk of misinformation, and gain a competitive advantage. This is crucial for applications that involve sensitive information or critical decision-making.

Pricing of Cleanlab

Pricing details are not available in the provided documentation. Please visit the Cleanlab website for current pricing plans.